Hardware and Software Understanding Delivers High-Performance Embedded AI

Artificial intelligence (AI) has become one of the key drivers of innovation. The high performance of cloud computing has made it possible to use AI to build smart agents that can take control of and streamline important business processes.

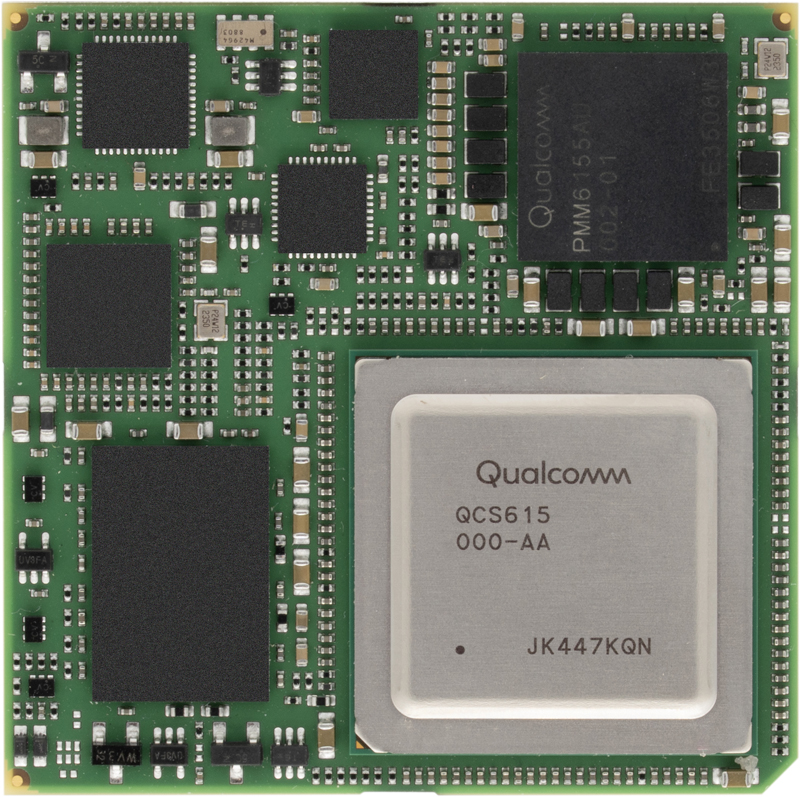

Figure 1: TRIA SM2S-QCS6490 - Compute Module by Tria

Developers and users of the embedded systems that control industrial and other real-time processes can use the cloud to embrace AI capabilities. But there is a growing need for local AI processing to overcome problems associated with the need for a persistent and unbroken connection to the cloud servers. Numerous semiconductor vendors have responded with dedicated AI accelerators, often incorporated into general-purpose multicore processors.

The performance of embedded accelerators is generally limited by the power and silicon area available to them. That implies a gap between the capabilities they can deliver and those available in the cloud. The gap becomes increasingly apparent with the trend towards large generative-AI models, which now underpin most agentic use-cases and have made it possible to add natural-language user interfaces to applications.

Steady progress in efficient AI has delivered technologies such as MobileNet for image recognition, which underpins the models needed for applications in security, retail, logistics and industrial automation. A similar focus on size and computational efficiency, in which developers have exploited the accuracy improvements that come with larger training sets, is yielding generative AI implementations that can substitute for much larger models such as Llama2-7B. TinyLlama, for example, has just 1.1 billion parameters.
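Much of MobileNet's efficiency comes from replacing standard convolutions with depthwise separable ones. The sketch below counts multiply-accumulate operations (MACs) for both forms of a single layer, assuming stride 1 and "same" padding; the layer dimensions are hypothetical and chosen only for illustration.

```python
def standard_conv_macs(dk: int, m: int, n: int, df: int) -> int:
    """MACs for a standard dk x dk convolution from m to n channels
    over a df x df output feature map (stride 1, 'same' padding)."""
    return dk * dk * m * n * df * df


def depthwise_separable_macs(dk: int, m: int, n: int, df: int) -> int:
    """MACs for a depthwise dk x dk filter applied per input channel,
    followed by a 1x1 pointwise convolution mixing m channels into n."""
    depthwise = dk * dk * m * df * df
    pointwise = m * n * df * df
    return depthwise + pointwise


if __name__ == "__main__":
    # Hypothetical layer: 3x3 kernel, 256 -> 256 channels, 14x14 feature map
    std = standard_conv_macs(3, 256, 256, 14)
    sep = depthwise_separable_macs(3, 256, 256, 14)
    print(f"standard:  {std:,} MACs")
    print(f"separable: {sep:,} MACs")
    # The ratio simplifies to 1 / (1/n + 1/dk**2)
    print(f"reduction: {std / sep:.1f}x")
```

For this layer the separable form needs roughly 8.7 times fewer MACs. Because the ratio is 1/(1/N + 1/D_K²), 3x3 kernels with many output channels approach a ninefold saving.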

The development of more streamlined AI models has proceeded alongside hardware optimisations that can deliver high throughput on more constrained hardware. Qualcomm is one of the leading specialists in this area. Its team has performed extensive evaluations of techniques such as pruning and microscaling that reduce computational overhead. Microscaling, for example, replaces full-precision floating-point operations with more hardware-efficient arithmetic on smaller operands, such as 8-bit integers that share a common scale factor across each block of values. The recent acquisition of Edge Impulse, a specialist in tuning AI for low-power hardware, has augmented that work.
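The block-scaling idea behind microscaling can be sketched in a few lines. This is a minimal illustration, not Qualcomm's implementation: it assumes a block size of 32, signed 8-bit elements and a shared power-of-two scale per block, in the spirit of the published MX formats. Inside each block the dot product uses only integer multiply-accumulate, and the two scales are applied once per block.

```python
import math
from typing import List, Tuple

BLOCK_SIZE = 32                # assumption: elements that share one scale
QMAX = 127                     # assumption: signed 8-bit elements

Block = Tuple[int, List[int]]  # (shared power-of-two exponent, int8 values)


def quantize_block(values: List[float]) -> Block:
    """Pick the smallest power-of-two scale that keeps every value
    within +/-QMAX, then round each value to an integer."""
    amax = max(abs(v) for v in values)
    if amax == 0.0:
        return 0, [0] * len(values)
    exp = math.ceil(math.log2(amax / QMAX))
    scale = 2.0 ** exp
    return exp, [max(-QMAX, min(QMAX, round(v / scale))) for v in values]


def quantize(values: List[float]) -> List[Block]:
    """Split a vector into blocks and quantize each one independently."""
    return [quantize_block(values[i:i + BLOCK_SIZE])
            for i in range(0, len(values), BLOCK_SIZE)]


def dequantize(blocks: List[Block]) -> List[float]:
    """Recover approximate float values from quantized blocks."""
    return [q * 2.0 ** exp for exp, qs in blocks for q in qs]


def dot_quantized(a: List[Block], b: List[Block]) -> float:
    """Dot product using integer multiply-accumulate inside each block;
    the two shared scales are applied once per block, not per element."""
    total = 0.0
    for (ea, qa), (eb, qb) in zip(a, b):
        acc = sum(x * y for x, y in zip(qa, qb))  # integer-only arithmetic
        total += acc * 2.0 ** (ea + eb)
    return total
```

Choosing a power-of-two scale means rescaling is a simple exponent adjustment rather than a full multiply, which is one reason such formats map well onto hardware.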

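This work has given Qualcomm a great deal of insight into model optimisation techniques that are now extending into generative AI. Qualcomm’s engineering team was instrumental in refining the concept of speculative decoding as a way of improving the latency and efficiency of a large language model (LLM). The technique splits execution between a small, fast model, which can run locally, and a larger model, which can run in the cloud. The small model drafts several tokens ahead and the larger model checks them together, accepting those it agrees with, so it no longer has to generate every token one at a time. That speeds up overall execution.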
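The accept-or-correct loop at the heart of speculative decoding can be sketched with toy models. This is generic greedy speculative decoding under stated assumptions, not Qualcomm's hybrid implementation: the `Model` interface, `toy_target` and `toy_draft` are hypothetical stand-ins for a large and a small LLM. Every emitted token is the target model's own choice, so the output matches plain target-only decoding; the saving is in the number of target rounds.

```python
from typing import Callable, List, Tuple

# Hypothetical interface: given a token sequence, return the model's
# greedy (most likely) next token.
Model = Callable[[List[int]], int]


def greedy_decode(model: Model, prompt: List[int], max_new: int) -> List[int]:
    """Reference: generate one token per model call."""
    seq = list(prompt)
    for _ in range(max_new):
        seq.append(model(seq))
    return seq


def speculative_decode(target: Model, draft: Model, prompt: List[int],
                       max_new: int, k: int = 4) -> Tuple[List[int], int]:
    """Greedy speculative decoding. Returns the tokens and the number
    of verification rounds the target model needed."""
    seq = list(prompt)
    rounds = 0
    while len(seq) - len(prompt) < max_new:
        # 1. The cheap draft model proposes k tokens autoregressively.
        proposal: List[int] = []
        for _ in range(k):
            proposal.append(draft(seq + proposal))
        # 2. The target checks each position. On real hardware all k + 1
        #    predictions come from one batched pass; here it is called
        #    per position for clarity.
        rounds += 1
        accepted: List[int] = []
        for i in range(k + 1):
            expected = target(seq + accepted)
            accepted.append(expected)  # matching draft, correction or bonus token
            if i == k or proposal[i] != expected:
                break
        seq.extend(accepted)
    return seq[:len(prompt) + max_new], rounds


# Hypothetical toy models: the draft agrees with the target except when
# the context length is a multiple of 3.
def toy_target(ctx: List[int]) -> int:
    return (sum(ctx) * 31 + len(ctx)) % 50


def toy_draft(ctx: List[int]) -> int:
    t = toy_target(ctx)
    return t if len(ctx) % 3 else (t + 1) % 50
```

With these toy models, `speculative_decode(toy_target, toy_draft, [1, 2, 3], 20)` produces the same 20 tokens as `greedy_decode` while needing far fewer than 20 target rounds.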

Understanding of speculative decoding and other AI functions optimised for edge and embedded applications has informed the hardware architecture that Qualcomm has developed over the past decade. Implemented initially on the Snapdragon smartphone platform, this hardware now extends into industrial automation with the Dragonwing family.

Model tuning can only go so far when it comes to porting high-performance AI models to embedded platforms. The Snapdragon and Dragonwing processors close that gap. Where many competing solutions deliver throughput of up to 10 trillion operations per second (TOPS), the IQ9 generation in Qualcomm’s family can deliver more than 100 TOPS. That provides the capability to run not just TinyLlama and similar reduced-footprint LLMs but the full Llama2 model with 13 billion parameters. Such large models can run at more than 10 tokens per second, fast enough to support local generative AI for natural-language interfaces.
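A back-of-envelope estimate helps show what generating 10 tokens per second from a 13-billion-parameter model demands. The sketch assumes 4-bit weight quantization (not stated in the source), that every weight is read once per generated token at batch size 1, and roughly two operations per weight per token; KV-cache traffic and activations are ignored.

```python
def decode_estimate(params_billion: float, bits_per_weight: float,
                    tokens_per_s: float) -> dict:
    """Rough resources for single-stream LLM token generation.
    Assumes each weight is read once per token (batch size 1) and
    ~2 operations (multiply + add) per weight per token."""
    n_weights = params_billion * 1e9
    weight_gb = n_weights * bits_per_weight / 8 / 1e9
    return {
        "weights_gb": weight_gb,                         # model footprint
        "bandwidth_gb_s": weight_gb * tokens_per_s,      # weight reads per second
        "compute_tops": 2 * n_weights * tokens_per_s / 1e12,
    }


if __name__ == "__main__":
    # Llama2-13B at 10 tokens/s, assuming 4-bit weights
    print(decode_estimate(13, 4, 10))
```

Under these assumptions the model needs about 6.5 GB for weights, about 65 GB/s of memory bandwidth and only about 0.26 TOPS for token generation, so memory bandwidth, not raw TOPS, typically sets the token rate. Compute headroom matters more for processing long prompts and running several models at once.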

Energy optimisation is another strength of the Hexagon architecture, which provides the foundation for Dragonwing’s AI support. Its optimisations extend the operational life of battery-powered systems between charges. One example is micro-tile inferencing, which leverages the core organisation of the Hexagon coprocessor: a set of execution engines that share a common, central memory.


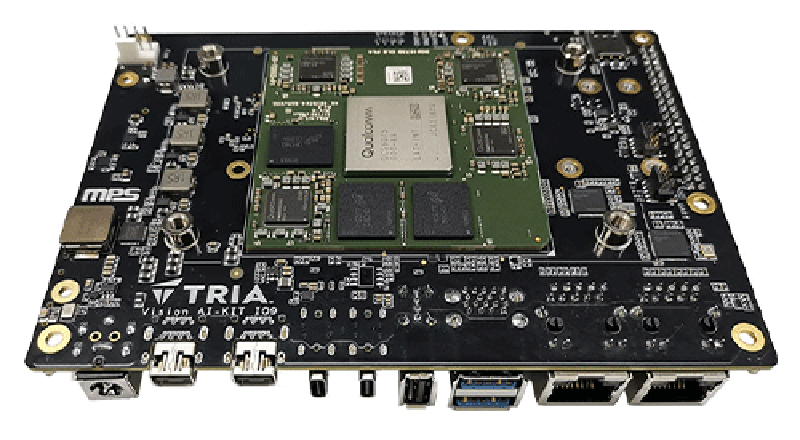

Figure 2: TRIA OSM-LF-IQ615 - Compute Module by Tria

Micro-tile inferencing lets a scaled-down model run for long periods in a low-energy state. That model might be used to detect certain types of sound, or movement in images captured by a camera, and then activate more capable models to evaluate the input. The common-memory architecture also lets developers take full advantage of techniques such as layer fusing, which MobileNet and other models employ. By processing multiple layers in a single pass, layer fusing reduces the number of accesses that need to be made to external memory. This results in large energy savings compared with other architectures and implementations.
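The memory-traffic saving from layer fusing can be illustrated by counting element reads and writes to "external" memory. This minimal sketch assumes purely elementwise layers (for example scale, bias and ReLU) so that tiles are independent; fusing convolutions would also require overlapping tile borders. The tile size is an arbitrary assumption.

```python
from typing import Callable, List, Tuple

Op = Callable[[float], float]


def run_unfused(x: List[float], ops: List[Op]) -> Tuple[List[float], int]:
    """Each layer reads its whole input from external memory and writes
    its whole output back before the next layer starts."""
    traffic = 0
    buf = x
    for op in ops:
        traffic += len(buf)            # read layer input
        buf = [op(v) for v in buf]
        traffic += len(buf)            # write layer output
    return buf, traffic


def run_fused(x: List[float], ops: List[Op],
              tile: int = 8) -> Tuple[List[float], int]:
    """Process one small tile through every layer while it stays in
    local memory; only the input and final output touch external memory."""
    traffic = 0
    out: List[float] = []
    for start in range(0, len(x), tile):
        t = x[start:start + tile]
        traffic += len(t)              # single read of the tile
        for op in ops:                 # intermediates never leave local memory
            t = [op(v) for v in t]
        out.extend(t)
        traffic += len(t)              # single write of the result
    return out, traffic
```

For three layers over 100 elements, the unfused version moves 600 elements to and from external memory while the fused version moves 200, and both produce identical results.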

The Hexagon execution engines include pipelines dedicated to scalar, vector and tensor arithmetic. This organisation lets software schedule tasks on the most appropriate part of the coprocessor to take full advantage of its acceleration capabilities. Throughput increases further with support for hardware multithreading, a technique that exploits thread-level parallelism to hide the latency of accesses to external memory. Whenever a thread has to wait for memory, another thread that already has the data it needs can run until it, in turn, stalls and hands over to another.
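A simple analytical model shows why switching threads on a memory stall raises throughput. This sketch assumes each thread alternates a fixed burst of compute with a fixed memory wait, and that switching threads costs nothing; real hardware has finite thread counts and variable latencies, and the cycle figures here are hypothetical.

```python
import math


def pipeline_utilization(threads: int, compute_cycles: int,
                         stall_cycles: int) -> float:
    """Fraction of cycles doing useful work when each thread alternates
    compute_cycles of work with a stall_cycles memory wait, and the core
    switches to another ready thread whenever the current one stalls.
    Assumes zero-cost switches and a fixed memory latency."""
    period = compute_cycles + stall_cycles
    return min(1.0, threads * compute_cycles / period)


def threads_to_hide_latency(compute_cycles: int, stall_cycles: int) -> int:
    """Smallest thread count that keeps the pipeline fully busy."""
    return math.ceil((compute_cycles + stall_cycles) / compute_cycles)
```

If each thread computes for 10 cycles and then waits 30 cycles for memory, a single thread keeps the pipeline busy only 25% of the time, while four threads are enough to hide the memory latency completely.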

Hexagon includes a full scalar processor that can run Linux. This helps support the management of highly complex multi-model pipelines that can function without relying on the Arm application processors that Dragonwing devices also incorporate.

Tria’s incorporation of Dragonwing processors into a family of system-on-module (SoM) products gives developers easier access to this technology. For Qualcomm AI processors such as the QCS5430 and QCS6490, Tria built the SoM boards around the popular Smart Mobility ARChitecture (SMARC) standard. SMARC gives developers a family of AI-capable modules that can be used in products where space is at a premium, such as mobile robots.

To leverage the high performance of the IQ-9075, a key member of the IQ9 family, Tria developed a single-board computer (SBC) design in the 3.5in form factor that includes 36GB of LPDDR5 memory and high-performance camera interfaces based on the MIPI standard. The SMARC-based modules let designers choose from a range of Dragonwing-based designs built around the QCS5430, QCS6490 and IQ6 processors. A module built around the IQ6 in the OSM format targets designs that need a size-optimised AI platform. Boards built around the Snapdragon X Elite platform use the larger COM Express and COM-HPC formats to allow greater memory and I/O expansion and even higher computing performance.


Figure 3: Vision AI-KIT IQ9

A common feature of the Tria-designed boards is their thermally and electrically optimised design. The designers validated the behaviour of these modules in thermally constrained environments so that engineers who use them need not guess at how they will perform under different conditions, such as running in direct sunlight when mounted on a pole. The Dragonwing-based boards offer long-lifecycle support of 13 years or more. Tria’s modular design approach also allows scaling across product generations, making upgrades easier and letting products take advantage of higher-performance replacements.

With a ready-made hardware design suitable for integration into products, developers get a further boost to time to market from Qualcomm’s AI Hub. This platform provides access to hundreds of model implementations that have been optimised for the Snapdragon and Dragonwing platforms. Users simply select and download models to get up and running with AI, which lets them try out different approaches to see which best fits the target application.

The result of the tie-up between Qualcomm and Tria is a combination of high-performance AI acceleration, a software infrastructure that provides access to a huge range of AI models and hardware support that lets developers evaluate, prototype and test concepts as quickly as possible. The platform provides users across a range of industries, including industrial automation, retail, security, logistics and utilities, with the means to take advantage of the latest advancements in AI.