IMU-Based Navigation Technologies for Autonomous Vehicles

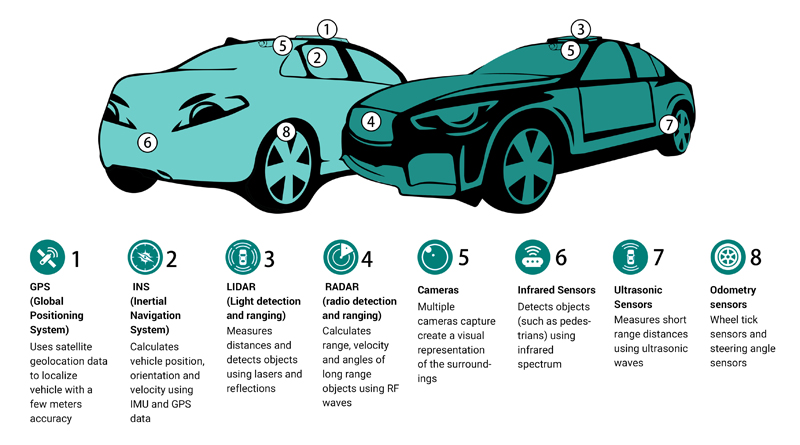

Figure 1. A wide variety of sensors and sensor types can be used to capture and combine data to provide highly accurate navigation for autonomous vehicles

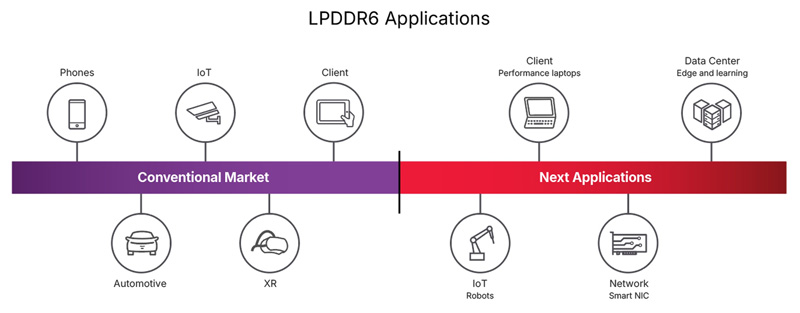

Sensing technologies for autonomous vehicles include perception sensors such as LiDAR, cameras, radar, and inertial measurement units (IMUs); car-to-car communications; up-to-date maps delivered to the cars; and GNSS/GPS-based navigation systems, which use location information from satellites. In addition, real-time kinematic (RTK) technologies can provide sub-meter location accuracy, sometimes within centimeters, to ensure that autonomous vehicles know exactly where they are and, more importantly, how to get safely to where they are going. Perception sensors are becoming increasingly critical additions to GNSS/RTK for accurately guiding an autonomous vehicle where there is little or no satellite signal from the GNSS network, such as in tunnels, parking structures, and urban canyons.

Achieving true precision navigation is considered so important that DARPA has active development efforts under way to improve navigation, specifically the ability to determine exact location with limited or no GPS/GNSS coverage.

First, what exactly is Precision Navigation?

In the context of many of today’s mobile applications, precision navigation is the ability of autonomous vehicles to continuously know their absolute and relative position in 3D space, with high accuracy, repeatability, and confidence. Required for safe and efficient operation, positioning data also needs to be quickly available, cost effective, and unrestricted by geography.


Figure 2. Highly precise navigation solutions need to be able to function well in adverse environmental conditions

Numerous industries have important applications that require reliable methods of precise navigation and localization. Smart agriculture increasingly relies on autonomous or semi-autonomous equipment to increase precision and productivity in cultivating and harvesting the world’s food supply. The importance of logistical efficiency in the warehousing and shipping/delivery industries became apparent during the global pandemic: warehouse and freight robots are only as effective as their ability to position and navigate themselves. Autonomous capability in long-haul and last-mile delivery also requires precise navigation.

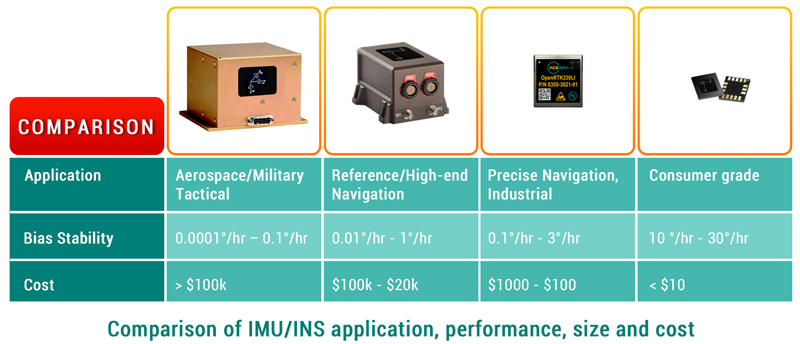

High-precision solutions do exist today and are used in applications such as satellite navigation, commercial airplanes, and submarines. However, these solutions are costly (hundreds of thousands of dollars) and large (about the size of a loaf of bread). The high cost and size of these inertial navigation systems (INS) stem primarily from the cost and size of the required high-performance IMU, with gyroscope performance being the most critical factor.

IMU technology has progressively reduced the size and cost of hardware, from bulky gimbal gyroscopes to fiber-optic gyroscopes (FOG), to today’s tiny micro-electro-mechanical systems (MEMS) sensors. As modern autonomous vehicle applications increase in complexity and sophistication, including the integration of artificial intelligence (AI) and machine learning (ML) to improve decision making and safety, the cost of INS software development and integration must also be factored in.


Figure 3. Inertial navigation systems and sensors range in performance, size, and cost. Even though originally developed for specialized solutions, all are now found in automotive applications

What is Holding Back the Self Driving Car Industry?

When will autonomous, self-driving cars for consumers actually become safe and reliable enough for daily use? Car manufacturers have been promising self-driving cars on our streets for decades but, aside from a few small test areas, they still have not delivered. Why?

The key to mass-market acceptance of autonomous vehicles is providing safe and highly accurate, avionics-level location performance while simultaneously bringing down cost and size. The highly precise IMU-based navigation systems used in aviation and space vehicles can cost a hundred thousand dollars or more. In comparison, the navigation IMUs used in smartphones and other devices cost just a few cents. In addition, avionics-quality IMU systems are quite bulky, while the IMUs used in phones and mobile devices are tiny and can fit on a small circuit board.


Figure 4. The ACEINNA OpenRTK330LI module measures just 31 x 34 x 5 mm

The automobile industry is waiting for an affordable navigation solution that provides avionics-level accuracy.

How RTK Can Improve GPS/GNSS Accuracy

On their own, GPS/GNSS technologies are not accurate enough to be used for fast-moving autonomous cars. Issues such as atmospheric interference and outdated satellite orbital path data can be corrected to some extent, providing, in the best case, positioning accuracy of around a couple of meters. However, when these are compounded by multi-path errors from tall buildings in urban areas, and/or poor coverage in other areas, the receiver’s margin of error increases and positioning accuracy decreases.


Figure 5. Because of multi-path errors and reflections from tall buildings in urban areas, satellite provided positioning is often inaccurate. This becomes even more critical when autonomous vehicles need to make complicated moves, such as making a tight left turn in a city

This margin of error is acceptable for basic road-level accuracy, for example knowing which road a vehicle is probably on. Higher-precision navigation aims for lane-level accuracy or better.

Imagine a person driving down a road. Without having to try very hard, most drivers can observe and safely maneuver around obstacles such as pedestrians, bicyclists, and potholes without veering out of their lane. Every day, throughout the world, in rain, snow, and dust, and even with confusing road signs, drivers traverse complex intersections. Humans use multiple senses of perception (including depth perception), together with accumulated experience and practice, to perform the seemingly simple task of driving a vehicle.

In a similar way, autonomous and semi-autonomous vehicles use a suite of perception sensors, in conjunction with navigation solutions, to safely maneuver from one point to another.

Because of the existing limitations in GPS/GNSS and inertial navigation systems requiring tradeoffs between performance, cost and size, autonomous vehicle systems must employ additional methods to enhance positioning accuracy.

To enable a vehicle to safely navigate in a complex, dynamic environment with minimal human intervention, lane-level or centimeter-level positioning accuracy is critical for L2 and higher levels of Advanced Driver Assistance Systems (ADAS).

One popular method of localization is to use image and depth data from LiDAR and cameras, combined with HD maps, to calculate vehicle position in real time with reference to known static landmarks and objects. HD maps are a powerful tool that contain massive amounts of data including road and lane-level details and semantics, dynamic behavioral data, traffic data, and much more. Camera images and/or 3D point clouds are layered on and cross-referenced to HD map data in order for a vehicle to make maneuvering decisions for vehicle control.

This method of localization, while effective, has its challenges. HD maps are data intensive, expensive to generate at scale, and must be constantly updated. Perception sensors are prone to environmental interference, which compromises data quality.

As the number of automated test vehicle fleets on the road increases, larger data sets of real-world driving scenarios are generated and predictive modeling for localization is becoming more robust. However, it comes at the high cost of expensive sensors, computational power, algorithms, high operational maintenance, and terabytes of data collection and processing.

There is an opportunity to improve both the accuracy and integrity of localization methodologies for precise navigation by incorporating real-time kinematic (RTK) into an INS solution. RTK is a technique and service that corrects for errors and ambiguities in GPS/GNSS data to enable centimeter-level accuracy. RTK works with a network of fixed base stations that transmit correction data over the air to the moving rovers. Each moving rover then integrates this data in its INS positioning engine to calculate a position in real time, which can achieve accuracy as fine as 1 cm + 1 ppm, even without any additional sensor fusion, and with very short convergence times.
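As a rough illustration of what a "1 cm + 1 ppm" figure means, the sketch below assumes the common reading of such specifications: a fixed term plus a term proportional to the baseline, that is, the distance between the rover and the base station supplying corrections. The function name and the example baselines are hypothetical and chosen only for illustration; they are not taken from any particular receiver's data sheet.

```python
def rtk_nominal_error_m(baseline_km: float,
                        fixed_cm: float = 1.0,
                        ppm: float = 1.0) -> float:
    """Nominal error in metres for a 'fixed_cm + ppm' style accuracy figure.

    Assumption: the ppm term scales with the rover-to-base distance, so
    1 ppm adds 1 mm of error per kilometre of baseline.
    """
    baseline_m = baseline_km * 1000.0
    return fixed_cm / 100.0 + ppm * 1e-6 * baseline_m


if __name__ == "__main__":
    # Hypothetical baselines, in kilometres.
    for km in (1, 10, 30):
        error_cm = rtk_nominal_error_m(km) * 100.0
        print(f"{km:>2} km baseline -> about {error_cm:.1f} cm")
```

Under this reading, the distance-dependent term stays small compared with lane width even at baselines of tens of kilometres, which is why the density of the base-station network matters less than the availability of the correction link itself.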

Integrating RTK into an INS and sensor fusion architecture is relatively straightforward and does not require heavy use of system resources. In moving vehicles, RTK does require connectivity and GNSS coverage to enable the most precise navigation, but even during an outage, a system can fall back on dead reckoning with a high-performance IMU for continued safe operation.
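Dead reckoning during an outage can be sketched in a few lines. The example below is a deliberately simplified 2D model, not the algorithm of any specific INS: it assumes the vehicle's forward speed and the gyroscope's yaw rate are available at a fixed time step, and it propagates the last known pose forward from them. The `Pose2D` type, the function name, and the numbers in the usage section are all hypothetical.

```python
import math
from dataclasses import dataclass


@dataclass
class Pose2D:
    x: float        # metres along the x axis
    y: float        # metres along the y axis
    heading: float  # radians, counter-clockwise from the x axis


def dead_reckon_step(pose: Pose2D, speed_mps: float,
                     yaw_rate_rps: float, dt: float) -> Pose2D:
    """Advance the pose by one time step using speed and yaw rate only."""
    heading = pose.heading + yaw_rate_rps * dt
    x = pose.x + speed_mps * math.cos(heading) * dt
    y = pose.y + speed_mps * math.sin(heading) * dt
    return Pose2D(x, y, heading)


if __name__ == "__main__":
    # Start from the last RTK fix (taken as the origin) and drive
    # straight at 10 m/s for 5 s, sampled at 100 Hz.
    pose = Pose2D(0.0, 0.0, 0.0)
    for _ in range(500):
        pose = dead_reckon_step(pose, speed_mps=10.0,
                                yaw_rate_rps=0.0, dt=0.01)
    print(pose)
```

Because each step builds on the previous one, any error in the measured yaw rate or speed accumulates for as long as the outage lasts, which is one way to see why IMU quality determines how long a vehicle can operate safely without GNSS.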

The benefits of RTK serve to bolster visual localization methodologies by providing precise absolute position. The RTK data can be used by the localization engine to reduce ambiguities and validate temporal and contextual estimations.
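One simple way to picture how an absolute RTK position can both validate and refine a visual localization estimate is inverse-variance weighting along a single axis. This is an illustrative sketch only; production localization engines typically use a full Kalman filter or a similar estimator, and the article does not specify one. The function names and the numbers in the usage section are hypothetical.

```python
import math


def is_consistent(est_a: float, var_a: float,
                  est_b: float, var_b: float,
                  n_sigma: float = 3.0) -> bool:
    """Check that two estimates agree within n_sigma of their combined spread."""
    return abs(est_a - est_b) <= n_sigma * math.sqrt(var_a + var_b)


def fuse_1d(est_a: float, var_a: float,
            est_b: float, var_b: float) -> tuple[float, float]:
    """Inverse-variance weighted average of two independent estimates."""
    weight_a = var_b / (var_a + var_b)
    fused = weight_a * est_a + (1.0 - weight_a) * est_b
    fused_var = (var_a * var_b) / (var_a + var_b)
    return fused, fused_var


if __name__ == "__main__":
    # Hypothetical lateral position estimates, in metres.
    visual, visual_var = 12.40, 0.20 ** 2   # camera/LiDAR map matching
    rtk, rtk_var = 12.10, 0.02 ** 2         # RTK fix

    if is_consistent(visual, visual_var, rtk, rtk_var):
        position, variance = fuse_1d(visual, visual_var, rtk, rtk_var)
        print(f"fused: {position:.3f} m, sigma {math.sqrt(variance):.3f} m")
    else:
        print("estimates disagree; flag for integrity handling")
```

The consistency check plays the validation role described above, and the weighting pulls the fused result toward whichever source is currently more certain, so the estimate degrades gracefully when either source becomes noisy.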

Conclusion

Precise navigation capability is at the core of countless modern applications and industries that strive to make our daily lives better – autonomous vehicles, micro-mobility, smart agriculture, construction, and surveying. If a machine can move, it is vital to measure and control its motion with accuracy and certainty.

Inertial sensors are essential for measuring motion and GPS/GNSS provides valuable contextual awareness about location in 3D space. Adding RTK to the calculation can greatly increase overall navigation accuracy. Vision sensors enable depth perception, which provides even better recognition of the environment, including changes that have occurred since the latest download of maps.

Data from these various sensors and technologies are combined to enable confidence in navigational planning and decision making, to provide outcomes that are safe, precise, and predictable.