Solving Key Power Challenges for AI and Supercomputing

A new architecture may be the key to providing higher overall density and power system efficiency for AI systems, supercomputers and cloud datacenters

Numerous forums have allowed us to connect with customers and industry innovators in the computing domain, including the inaugural AI Hardware Summit and Supercomputing 2019 (SC19), alongside datacenter-focused events such as the Open Compute Project (OCP) Global Summit and the Open Data Center Committee (ODCC) Summit. Each of these events offered an eye-opening perspective on how AI, supercomputing and cloud datacenter vendors are approaching the key challenges associated with maximizing processing performance and power efficiency.

In the AI domain, brute-force processing power is required to tackle what are commonly understood to be the most compute-intensive challenges of the modern era. This is being achieved by implementing ever higher-power processors and ever larger memory resources in clustered architectures that reduce the latency between onboard compute engines.

This is driving innovation from companies working in the area. Vicor has been working closely with one such company that has recently launched a new AI processor, which is widely regarded as the most powerful processor in AI today. It comprises more than 80 processing cells that span an entire wafer and yet still function as a single chip. The AI processor dramatically reduces the latency associated with a traditional singulated, socket-based chip architecture.

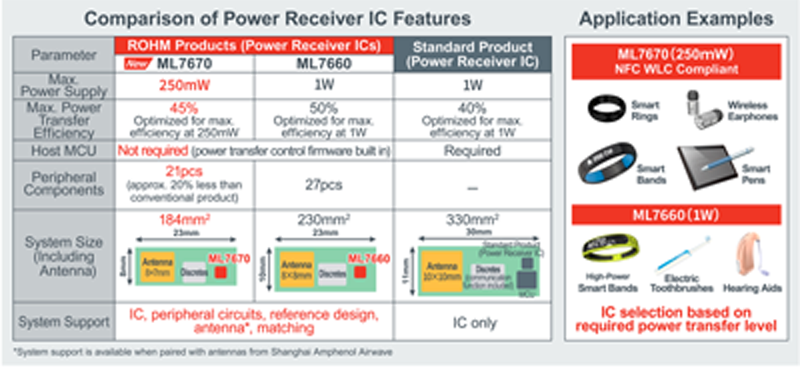


The new chip is rated at a massive 15kW – an order of magnitude greater than legacy processors. It also requires an advanced power architecture whereby power is applied uniformly to each cell at extremely high currents. To achieve this, the company worked closely with Vicor to implement a Vertical Power Delivery (VPD) architecture that forgoes traditional space-consuming routing patterns on the substrate and reduces power delivery network (PDN) resistance by more than 50%, thereby achieving higher overall density and power system efficiency. For the processor and beyond, VPD shows the way forward for higher power delivery in tightly clustered processor configurations.
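
To give a rough sense of why PDN resistance matters at this power level, the following minimal sketch estimates the load current and the resistive (I²R) loss in the PDN before and after halving its resistance. Only the 15kW rating and the 50% reduction come from the text above; the 0.8V core voltage and the 2µΩ baseline resistance are illustrative assumptions, not published figures for this processor.

```python
def pdn_loss_w(load_power_w: float, core_voltage_v: float, pdn_resistance_ohm: float) -> float:
    """Resistive (I^2 * R) loss in the PDN for a given load power and core voltage."""
    load_current_a = load_power_w / core_voltage_v
    return load_current_a ** 2 * pdn_resistance_ohm


CHIP_POWER_W = 15_000.0      # rating quoted in the article
CORE_VOLTAGE_V = 0.8         # ASSUMPTION: illustrative core voltage
BASELINE_PDN_OHM = 2e-6      # ASSUMPTION: illustrative lateral-routing PDN resistance
VPD_PDN_OHM = BASELINE_PDN_OHM * 0.5  # "more than 50%" reduction, taken here as exactly 50%

load_current_a = CHIP_POWER_W / CORE_VOLTAGE_V
baseline_loss_w = pdn_loss_w(CHIP_POWER_W, CORE_VOLTAGE_V, BASELINE_PDN_OHM)
vpd_loss_w = pdn_loss_w(CHIP_POWER_W, CORE_VOLTAGE_V, VPD_PDN_OHM)

print(f"Load current:      {load_current_a:,.0f} A")
print(f"Baseline PDN loss: {baseline_loss_w:,.1f} W")
print(f"VPD PDN loss:      {vpd_loss_w:,.1f} W")
```

Because the load current is fixed by the chip's power and voltage, the loss scales linearly with resistance: under these assumptions, halving the PDN resistance halves the power dissipated in the delivery network.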

Of course, the demand for ever higher computational power isn’t in itself new for scale-out computing infrastructure. But historically in the cloud datacenter domain, mainstream processors have been designed to limit power envelopes so that server racks can be cooled economically with air cooling techniques. In many ways, the main constraint in producing an economical server has been keeping processor power at or below a manageable threshold of roughly 200 watts. But with the advent of AI, there’s been a growing acceptance of advanced liquid cooling and even immersion cooling – not just in HPC, but in the cloud datacenter as well.

The trend toward higher power processing across the board is perhaps best exemplified by the 2019 launch of the OCP Accelerator Module (OAM), an open-hardware compute accelerator specification contributed to the OCP via a collaboration spearheaded by Intel and AMD, with considerable backing among other industry leaders such as Facebook, Microsoft and Baidu. The OAM spec aims to speed the adoption of new AI accelerators by reducing the implementation challenges and design complexities associated with proprietary AI hardware systems. Several cloud computing providers are already deploying these AI-class OAM cards in datacenters today.


The introduction of the OAM has spurred a lot of industry discussion exploring the idea of dedicated AI racks in the datacenter, and Facebook has publicly disclosed its plans in this regard. Many of the suppliers in this domain are taking a close look at immersion-cooled AI racks targeted at cloud datacenters – an idea that would have been unthinkable just two years ago.

The evolution to 48V

Another interesting aspect of the OAM is that it’s designed to support both 12V and 48V, accommodating the lingering demand for legacy 12V datacenter infrastructure while simultaneously supporting the needs of forward-looking 48V adopters. However, we anticipate that the majority of OAM customers are designing for 48V in order to achieve the added power boost compared to 12V.

These customers are guided in part by the 48V server and distribution infrastructure standard previously introduced by the OCP, with support from Google. Compared to legacy 12V architectures, 48V bus architectures enable system engineers to implement system designs that provide higher conversion efficiency, higher power density and lower distribution loss. At the macro level, 48V datacenter server infrastructure can reduce energy losses by over 30%, so it’s easy to see why cloud datacenter providers are accelerating their efforts to shift from 12V to 48V.
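
The lower distribution loss follows from basic circuit arithmetic: for the same delivered power, a 48V bus carries a quarter of the current of a 12V bus, so resistive loss in the same conductors falls by a factor of 16. The sketch below illustrates this with a hypothetical 12kW rack and a hypothetical 1mΩ bus resistance; neither figure comes from the article. It covers only resistive distribution loss, not the conversion stages that also contribute to the system-level figure quoted above.

```python
def bus_loss_w(delivered_power_w: float, bus_voltage_v: float, bus_resistance_ohm: float) -> float:
    """Resistive (I^2 * R) loss in a distribution bus carrying the given power."""
    bus_current_a = delivered_power_w / bus_voltage_v
    return bus_current_a ** 2 * bus_resistance_ohm


RACK_POWER_W = 12_000.0      # ASSUMPTION: hypothetical rack load
BUS_RESISTANCE_OHM = 1e-3    # ASSUMPTION: hypothetical busbar/cable resistance

loss_12v_w = bus_loss_w(RACK_POWER_W, 12.0, BUS_RESISTANCE_OHM)
loss_48v_w = bus_loss_w(RACK_POWER_W, 48.0, BUS_RESISTANCE_OHM)

print(f"12V bus loss: {loss_12v_w:.1f} W")
print(f"48V bus loss: {loss_48v_w:.1f} W")
print(f"Reduction factor: {loss_12v_w / loss_48v_w:.0f}x")
```

The factor of 16 holds regardless of the assumed power and resistance, since it depends only on the square of the voltage ratio.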

In the meantime, cloud providers leveraging 12V infrastructure have the option to deploy non-isolated step-up converters that convert 12V to 48V with over 98% peak efficiency. This gives them newfound flexibility to take advantage of next-generation AI cards as they navigate the transition to 48V power distribution. The recent emergence of bidirectional 48V/12V converters has added an extra layer of flexibility for cloud datacenter providers aiming to support either or both standards as their infrastructure evolves.
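
As a rough illustration of what 98% efficiency means in practice, the sketch below computes the input power, the 12V input current and the power dissipated in a step-up converter feeding a 48V accelerator card. The 500W card load is a hypothetical value chosen for illustration, and the 98% figure is treated as constant, whereas the article quotes it as a peak value and real converter efficiency varies with load.

```python
def step_up_budget(output_power_w: float, efficiency: float, input_voltage_v: float = 12.0):
    """Return (input power W, input current A, converter loss W) for a step-up stage."""
    if not 0.0 < efficiency <= 1.0:
        raise ValueError("efficiency must be in the range (0, 1]")
    input_power_w = output_power_w / efficiency
    input_current_a = input_power_w / input_voltage_v
    loss_w = input_power_w - output_power_w
    return input_power_w, input_current_a, loss_w


CARD_POWER_W = 500.0   # ASSUMPTION: hypothetical 48V accelerator card load
EFFICIENCY = 0.98      # peak efficiency quoted in the article, assumed constant here

input_power_w, input_current_a, loss_w = step_up_budget(CARD_POWER_W, EFFICIENCY)

print(f"Input power from 12V rail: {input_power_w:.1f} W")
print(f"Input current at 12V:      {input_current_a:.1f} A")
print(f"Dissipated in converter:   {loss_w:.1f} W")
```

Under these assumptions, the converter dissipates about 10W while drawing a little over 40A from the 12V rail, which also shows why the 12V side of such a design still has to carry substantial current.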

These trends – and many others – will be top of mind among the AI, supercomputing and cloud datacenter communities as we head into 2020. Where these communities were previously regarded as entirely distinct, recent history has shown that, when it comes to power and cooling, they have more in common than previously thought.