Solving the Critical End of (Battery) Life for IoT Devices

Accurate measurements and event-based power analysis give the fast insights necessary to make battery life decisions.

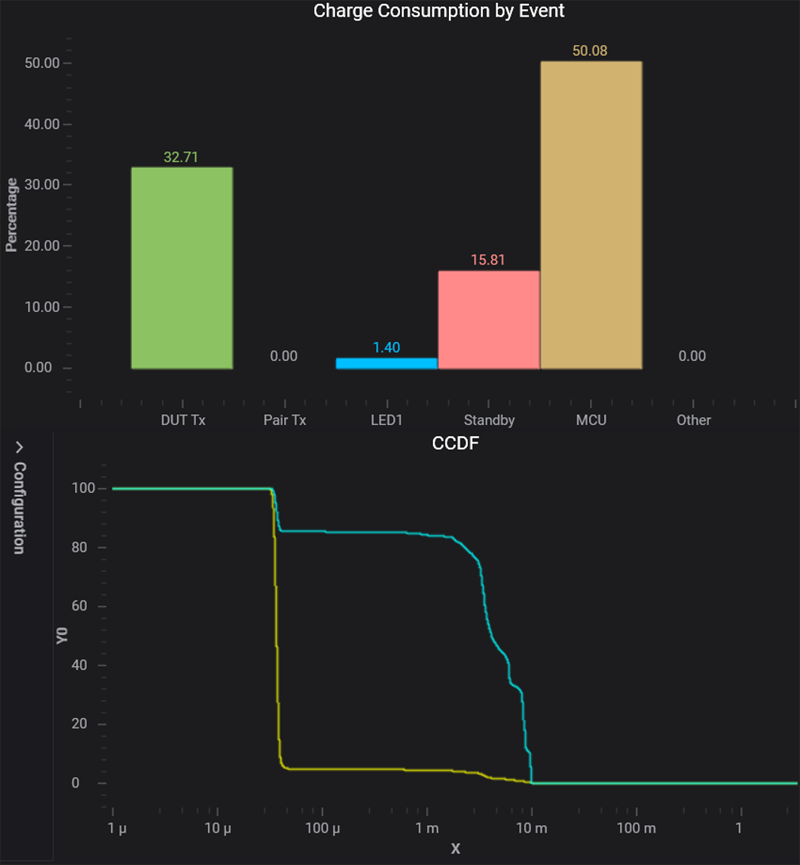

Figure 1: Event-based power analysis on Keysight X8712A IoT device battery life optimization solution

“My battery is low and it’s getting dark” was the slightly massaged final message sent from Oppy, NASA’s Mars rover, to mission control in February of this year, causing a worldwide social media sensation. It’s an issue that will be increasingly common over the next decade, as billions of IoT devices are deployed in application areas including smart cities, agriculture, energy, environment, smart homes, connected cars, smart buildings, and wireless medical devices. The overwhelming majority will be powered by small non-rechargeable, non-replaceable batteries, giving those devices a finite working life. While their eventual end of life won’t attract the same global attention as the demise of the Mars rover, the IoT device manufacturers that make the right decisions about efficient device operation toward and at the end of battery life will be best positioned to delight their customers and gain market share through competitive advantage.

Live long: Why battery life is key

For many IoT applications, optimizing battery life is important for several reasons. The most obvious reason is that customers prefer long operating life; device vendors who demonstrate superiority in this area will have a key competitive advantage that drives market share growth. In some cases, a long battery life may be essential to a project’s economic viability, and early failures of sensors and actuators may lead to unacceptably high replacement costs. If these costs are covered under warranty, the financial risk to the manufacturer may be extremely high.

There are also environmental issues associated with batteries, and proper disposal costs both time and money. Furthermore, some devices are installed in locations that are difficult to access, so battery replacement carries considerable labor costs and may not be realistic at all. If the battery is in an implanted medical device, the cost and risk rise sharply, to say nothing of the legal and human costs associated with failed medical devices.

To understand how products consume battery power, manufacturers use various tools to perform battery drain analysis (BDA). For simple devices that consume power at a steady rate, a digital multimeter (DMM) may suffice. For complex devices, such as cell phones and IoT devices, the current waveforms are highly variable, with fast switching between operational modes that draw tens or hundreds of milliamps and sleep modes that draw only microamps or nanoamps. To meet this challenge, modern BDA instruments need enough measurement bandwidth to capture these fast transitions, and seamless ranging to avoid losing data during measurement range changes.
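To make the arithmetic behind battery drain analysis concrete, here is a minimal sketch that turns a duty-cycled current profile into an average current and an idealized runtime. The segment values, the 220 mAh capacity, and the function names are all illustrative assumptions, not measurements from any device discussed here.

```python
def total_charge_c(segments):
    """Sum charge in coulombs over (duration_s, current_a) segments."""
    return sum(duration_s * current_a for duration_s, current_a in segments)


def average_current_a(segments):
    """Average current in amps over the whole profile."""
    total_time_s = sum(duration_s for duration_s, _ in segments)
    return total_charge_c(segments) / total_time_s


def ideal_life_hours(capacity_mah, avg_current_a):
    """Idealized runtime: capacity divided by average current.

    Ignores self-discharge, temperature, and cutoff voltage.
    """
    return capacity_mah / (avg_current_a * 1000.0)


# Hypothetical one-second cycle: 10 ms active at 20 mA, 990 ms asleep at 2 uA.
cycle = [(0.010, 20e-3), (0.990, 2e-6)]
avg_a = average_current_a(cycle)
life_h = ideal_life_hours(220.0, avg_a)  # 220 mAh is an assumed capacity
print(f"average current: {avg_a * 1e3:.4f} mA, ideal life: {life_h:.0f} h")
```

In this made-up profile the short active burst accounts for almost all of the charge, which is why an instrument that misses the burst or loses data during a range change can badly misjudge battery life.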

Beyond battery drain analysis to event-based power analysis

To make the appropriate design enhancements, design engineers also must know what is causing the charge consumption. This is particularly important for IoT device designers, because the IoT evolves quickly and product development cycles are very tight. Design and validation engineers must get quick insights into how and why their devices consume power, so correlating charge consumption with RF and sub-circuit events is key.

Many engineers find that event-based power analysis quickly reveals what events they need to focus on to improve their device’s charge consumption behavior. For example, event-based power analysis software can automatically analyze a waveform to produce fast, graphical insights, as shown in Figure 1.

In this case, the user can quickly see that the events labeled MCU and DUT Tx are consuming more than 80% of the device’s power (bar chart), and that most of the charge is consumed at rates between 5 and 10 mA (CCDF chart, bottom). Such insights allow the design engineer to make intelligent architectural, hardware configuration, and firmware programming choices. The engineer can also quickly re-test the device to see the effects of the changes.
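The kind of breakdown shown in the bar chart and CCDF chart can be approximated from raw samples. The sketch below is not how the Keysight software works internally; it assumes a uniformly sampled current waveform in which each sample already carries an event label, and it uses the textbook CCDF definition (the fraction of samples above a given level). The sample data and function names are hypothetical.

```python
from collections import defaultdict


def charge_by_event(samples, dt_s):
    """Accumulate charge (C) per event label.

    samples: iterable of (event_label, current_a) taken every dt_s seconds.
    """
    charge = defaultdict(float)
    for label, current_a in samples:
        charge[label] += current_a * dt_s
    return dict(charge)


def charge_shares(charge):
    """Convert per-event charge into fractions of the total."""
    total = sum(charge.values())
    return {label: q / total for label, q in charge.items()}


def ccdf(currents_a, level_a):
    """Fraction of samples whose current is strictly above level_a."""
    return sum(1 for i in currents_a if i > level_a) / len(currents_a)


# Hypothetical 100 ms capture sampled every 1 ms (values are made up).
samples = (
    [("MCU", 6e-3)] * 4
    + [("DUT Tx", 9e-3)] * 2
    + [("Sleep", 0.1e-3)] * 94
)
shares = charge_shares(charge_by_event(samples, dt_s=1e-3))
for label, share in sorted(shares.items(), key=lambda kv: -kv[1]):
    print(f"{label:7s} {share:6.1%}")
print(f"time above 5 mA: {ccdf([i for _, i in samples], 5e-3):.0%}")
```

Even in this toy capture, two events that occupy only a few percent of the time account for most of the charge, which is the kind of insight that tells an engineer where to focus.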

Eventually, of course, the device’s battery will near the end of its charge, and what was once a steady 3.3-V battery (for example) will deliver only 3.2 V, then 3.1 V, and so on until it fails completely, often in a mode that turns out to be surprising. Instead of simply letting the device operate normally until the battery fails, you can give your product a competitive edge by carefully answering the following questions:

· How does the device behave as voltage drops?

· How does this affect charge consumption?

· Where is the critical point?

· What would customers want you to do about it?

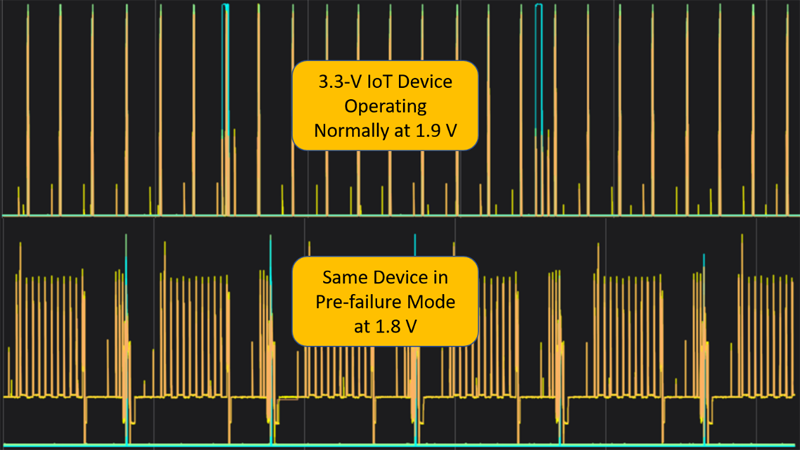

As an example, consider an event-based power analysis performed on an IoT sensor device normally operated at 3.3 V. Successive measurements were taken at 3.3 V, 3.2 V, 3.1 V, and so on down to 1.8 V. To normalize the data, the 3.3-V charge consumption was set to 100%, and subsequent measurements are shown relative to that 100% value.

Click image to enlarge

Figure 2: Relative charge consumption of a single IoT sensor at various voltage levels

Charge consumption was flat until approximately 2.9 V, at which point it began to climb slowly. There was a large increase between 2.3 and 2.2 V, and between 1.9 and 1.8 V the charge consumption jumped by a factor of 10. The charge consumed at 1.8 V is omitted to show the detail between 3.3 and 1.9 V.

The reason for the large charge consumption increase from 1.9 to 1.8 V is evident from the current profiles shown in Figure 3. The device operates normally at a supply voltage of 1.9 V, but it goes into a repetitive fail-and-retry loop at 1.8 V, which ironically consumes charge at the greatest rate when the battery can least afford it. This is analogous to how a car with a new battery starts up with little charge consumed, but a weak battery struggles to power the starter and soon fails completely.

Of course, this is only one example. A device’s behavior depends on its battery technology, its power supply and on-board converters, its power management system, the physical environment in which it operates, and other factors. Measuring the device at various voltage levels is critical to knowing how it will behave.
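A voltage sweep like the one described above can be post-processed with a few lines of code. The following sketch normalizes each measurement to the nominal-voltage value and flags the first step at which consumption jumps sharply. The charge values are invented to mimic the general shape described in the text (they are not the data behind Figure 2), and the 2x jump threshold is an arbitrary assumption.

```python
def normalize(charge_by_voltage, reference_v):
    """Express each charge value as a percentage of the reference-voltage value."""
    ref = charge_by_voltage[reference_v]
    return {v: 100.0 * q / ref for v, q in charge_by_voltage.items()}


def critical_voltage(relative, jump_ratio=2.0):
    """Walk from highest to lowest voltage and return the first voltage whose
    relative charge is at least jump_ratio times the previous step's value.

    Returns None if no step meets the threshold.
    """
    volts = sorted(relative, reverse=True)
    for prev_v, v in zip(volts, volts[1:]):
        if relative[v] >= jump_ratio * relative[prev_v]:
            return v
    return None


# Hypothetical charge per operating cycle, in microcoulombs, at each supply voltage.
measured_uc = {3.3: 50.0, 2.9: 50.0, 2.3: 60.0, 2.2: 80.0, 1.9: 90.0, 1.8: 900.0}

relative = normalize(measured_uc, reference_v=3.3)
knee_v = critical_voltage(relative)
print(relative)
print("critical point at or above:", knee_v, "V")
```

A fixed ratio is only one possible definition of the critical point; the right threshold depends on the device and on how much extra charge consumption the application can tolerate.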

Once you know how the device behaves at low voltage levels, you can either allow the device to operate in the usual manner until it fails or design it to degrade gracefully. Allowing it to operate in the usual manner until failure requires no additional firmware or voltage measurement circuitry. Designing a device to degrade gracefully requires appropriate firmware and voltage measurement circuitry (which may already be included on the device’s MCU or transceiver module), but it provides a longer useful product life, which may delight customers and build market share.

You have several options for gracefully degrading a device’s performance that can extend its battery life while only marginally reducing its usefulness. For example, consider an IoT sensor. Perhaps the device can measure fewer physical quantities; they may not all be equally important to customers. Perhaps the device can report data less often than usual, which would also tell customers that the battery is nearing end of life. Perhaps the device could take measurements over shorter measurement windows, which would trade off a bit of accuracy for longer battery life. In a process control application, the accuracy needed for a process that has been running within limits for a long time may not be as important as it was initially.
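One way to reason about these options is as a table of operating modes selected by supply voltage. The sketch below models such a policy in Python for illustration only; real device firmware would typically be written for the MCU in question, and every threshold, interval, channel count, and mode name here is a hypothetical placeholder. The final shutdown mode is an added assumption: it stops the device before it reaches a voltage where a fail-and-retry loop would waste the remaining charge.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Mode:
    name: str
    report_interval_s: int  # how often data is reported (0 = never)
    channels: int           # number of physical quantities measured
    window_ms: int          # length of each measurement window


# Hypothetical modes, from full function down to a clean stop.
NORMAL = Mode("normal", report_interval_s=60, channels=4, window_ms=100)
REDUCED = Mode("reduced", report_interval_s=300, channels=2, window_ms=50)
MINIMAL = Mode("minimal", report_interval_s=3600, channels=1, window_ms=20)
SHUTDOWN = Mode("shutdown", report_interval_s=0, channels=0, window_ms=0)


def select_mode(supply_v):
    """Pick an operating mode from the measured supply voltage.

    The thresholds are placeholders; in practice they would come from
    measuring the actual device at each voltage level.
    """
    if supply_v >= 2.9:
        return NORMAL
    if supply_v >= 2.3:
        return REDUCED
    if supply_v >= 1.9:
        return MINIMAL
    return SHUTDOWN


for v in (3.3, 2.5, 2.0, 1.8):
    print(v, select_mode(v).name)
```

Keeping the policy in a single table makes it easy to re-test the device after each change and compare charge consumption mode by mode.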

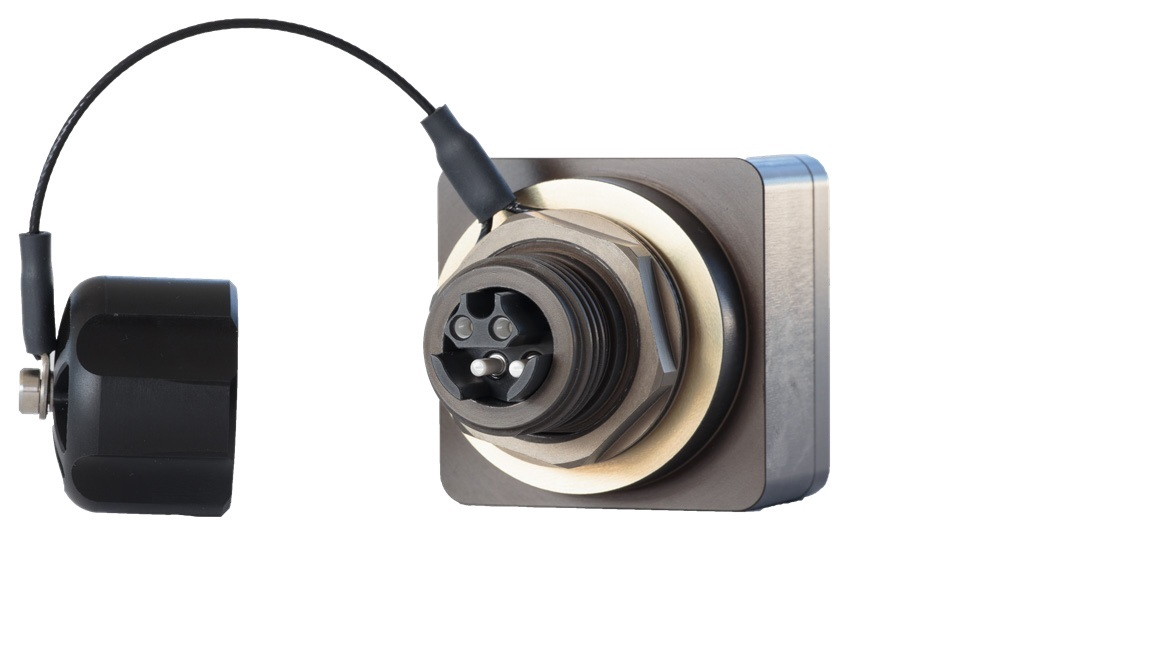

Figure 3: IoT sensor operating at 1.9 and 1.8 V, shown on Keysight X8712A IoT device battery life optimization solution

The bottom line is that no single “right” answer applies in every case; good end-of-battery-life decisions require solid engineering judgment and creativity. But by taking the appropriate measurements and performing event-based power analysis to get fast insights, you can quickly iterate your design and firmware to provide real value to your customers and give your device a competitive edge.

Keysight Technologies