With consumer paranoia about security at an all-time high, does automotive software development need to up its game in the quality stakes? The recent collaboration between automotive manufacturers and internet providers in the creation of the autonomous vehicle has sparked great consumer interest in the development of the car that will one day deliver functionality talked about in mid-20th century science fiction.

The initial enthusiasm has been tempered by the reality that connecting a car to the internet has inherent risks. On further consideration, is this paranoia about internet connectivity the real issue at hand and what questions does this raise about automotive software quality in general?

Exploiting weakness

We are all familiar with the regular headline stories detailing security breaches and hacking on our other connected devices such as phones, tablets and PCs. Once cars are connected to the internet, hackers can and will exploit the same classes of vulnerability, including a lack of encryption and the recording and cloning of key presses. Likewise, these vulnerabilities can easily be detected and prevented in a similar way, with the appropriate software testing, scripting and manual attacks (see Figure 1).

Figure 1: The multiple systems in a connected car provide opportunities for hackers to exploit vulnerabilities

These exploits are carried out by perpetrators with varying motives, so it is difficult to protect against every instance. Malicious hackers will use internet connections to break into car subsystems, but this is a small risk compared to poor embedded system quality. Hacking a car is a crime against an individual vehicle, whereas 100,000 cars with the potential to accelerate unexpectedly is a much bigger issue. The recent Mitsubishi Outlander Wi-Fi hotspot hack is a great example of such a hack.

While internet connectivity security risks are very visible and consumers are understandably concerned, there is a more serious software quality issue to deal with before we focus too much thought on hopping into our connected cars of the future. We have seen fundamental flaws in some of the latest software-heavy automotive platforms that might hold us back from the sci-fi vision.

Leading brands have publicly discovered quality issues in their operating software, resulting in huge recalls and, in some cases, accidents or near misses. Toyota (drive-by-wire throttle) and Jeep (dashboard hack) are two examples. Software updates to the user-based command and control systems, whether applied in the dealership or over the air, have also caused issues, with Lexus (sat nav upgrade) and Tesla (self-drive software) making mainstream news.

Safety first

Integral to the autonomous car vision is the fundamental notion that everyone should feel secure in their car. By feel secure we mean that there should be no unexpected behavior from the command and control systems deployed: when we change the radio station or open the window, we don’t expect the car to accelerate or brake of its own accord, or to behave abnormally in any respect. Before the move to autonomous can be complete, there is still a responsibility to make cars safe and secure in the way they behave, and this responsibility falls, in the main, to the embedded software.

We are familiar with software and security testing of internet-based applications to ensure that their vulnerabilities are detected and patched, but conducting software testing on safety-critical systems such as a fly-by-wire throttle, adaptive cruise control or anti-lock braking is a different matter. To put it into context, there are approximately 12 million lines of code in the Android operating system but over 100 million lines of code in an average four-door car.

While it’s generally acceptable today to deploy your average Minimum Viable Product (MVP) internet/mobile application with at least 70% testing thoroughness (code coverage), many areas of its code will not have been executed or exercised, and that is its residual technical debt. By comparison, the software in a car with 95% code coverage will have tested 95 million of its 100 million lines of code, roughly eight times the size of the entire 12 million line Android code base.
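The coverage arithmetic can be checked with a few lines of Python. This is only a sketch: it uses the line counts quoted above, treats the 12 million line Android figure as a stand-in for the internet application (an assumption made for illustration), and the helper name `lines_exercised` is hypothetical.

```python
# Figures quoted in the article; Android is used as a stand-in for the
# "internet application", which is an assumption made for illustration.
ANDROID_LOC = 12_000_000   # approximate lines of code in Android
CAR_LOC = 100_000_000      # approximate lines of code in an average car


def lines_exercised(total_lines: int, coverage: float) -> int:
    """Return how many lines a test suite executes at a given coverage ratio."""
    if not 0.0 <= coverage <= 1.0:
        raise ValueError("coverage must be between 0 and 1")
    return round(total_lines * coverage)


app_tested = lines_exercised(ANDROID_LOC, 0.70)
car_tested = lines_exercised(CAR_LOC, 0.95)
car_untested = CAR_LOC - car_tested

print(f"Application lines exercised at 70%: {app_tested:,}")
print(f"Car lines exercised at 95%:         {car_tested:,}")
print(f"Car lines never exercised:          {car_untested:,}")
print(f"Car tested vs whole application:    {car_tested / ANDROID_LOC:.1f}x")
```

Even at 95% coverage, the car in this sketch is left with five million lines that no test has ever executed.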

Checking code

Static analysis as a tool for checking automotive software code began life in the early 1970s and has become a pillar of software testing. It produces its output by parsing the code and comparing it to a dictionary and best-practice rules without executing it. One of the main challenges with using static analysis is the number of false positives (noise) produced.
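To make the idea of checking code without executing it concrete, here is a minimal sketch using Python’s standard `ast` module. The two rules and the sample function are invented for illustration and are not taken from MISRA or any other standard; commercial analyzers apply far larger rule sets, mostly to C and C++.

```python
import ast

# Hypothetical rule set for illustration; real checkers ship hundreds of rules.
BANNED_CALLS = {"eval", "exec"}

# Sample code to analyze. It is only parsed, never run.
SAMPLE_SOURCE = '''
def set_throttle(raw):
    value = eval(raw)
    try:
        return int(value)
    except:
        return 0
'''


def check_source(source: str) -> list[tuple[int, str]]:
    """Parse source without running it and return (line, message) findings."""
    findings = []
    for node in ast.walk(ast.parse(source)):
        # Rule 1: calls to banned built-in functions.
        if (isinstance(node, ast.Call)
                and isinstance(node.func, ast.Name)
                and node.func.id in BANNED_CALLS):
            findings.append(
                (node.lineno, f"call to banned function '{node.func.id}'"))
        # Rule 2: a bare 'except:' hides every kind of error.
        if isinstance(node, ast.ExceptHandler) and node.type is None:
            findings.append((node.lineno, "bare 'except:' clause"))
    return sorted(findings)


for line, message in check_source(SAMPLE_SOURCE):
    print(f"line {line}: {message}")
```

The checker reports what the code looks like, not what it does at run time, which is why every finding still has to be judged by a developer.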

As static analysis doesn’t execute the code, it falls to developers to use unit testing. Unit testing ensures that a unit of software behaves exactly as specified in its formal design and requirements documentation, with test cases written to test every execution path and to eliminate any false positives. Today, there are hundreds if not thousands of pieces of software from a huge global supply chain that must be integrated to create a complete automotive system.
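As a small illustration of writing a test case for every execution path, the sketch below uses Python’s standard `unittest` module. The unit under test, `clamp_throttle`, is a hypothetical function invented for this example; it has three paths, and each one gets its own test, plus one for the boundary values.

```python
import unittest


def clamp_throttle(requested_pct: float) -> float:
    """Hypothetical unit under test: limit a throttle request to 0-100 percent."""
    if requested_pct < 0.0:
        return 0.0
    if requested_pct > 100.0:
        return 100.0
    return requested_pct


class ClampThrottleTest(unittest.TestCase):
    def test_negative_request_is_raised_to_zero(self):
        self.assertEqual(clamp_throttle(-5.0), 0.0)

    def test_excessive_request_is_capped(self):
        self.assertEqual(clamp_throttle(150.0), 100.0)

    def test_valid_request_passes_through(self):
        self.assertEqual(clamp_throttle(42.5), 42.5)

    def test_boundary_values_are_unchanged(self):
        self.assertEqual(clamp_throttle(0.0), 0.0)
        self.assertEqual(clamp_throttle(100.0), 100.0)


def run_tests() -> unittest.TestResult:
    """Load and run the test case, returning the result object."""
    suite = unittest.defaultTestLoader.loadTestsFromTestCase(ClampThrottleTest)
    return unittest.TextTestRunner(verbosity=0).run(suite)


if __name__ == "__main__":
    run_tests()
```

Unlike the static check, these tests execute the code, so a pass or fail is evidence about actual behavior rather than a pattern match.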

To cope with the heightened pressure to create high-quality, safe software, the automotive industry examined other industries for solutions and went on to create standards that mirrored the best practices of the aerospace and rail industries. This effort has resulted in several standards (Automotive SPICE, AUTOSAR, ISO 26262 and MISRA) emerging to satisfy the increase in safety legislation, which aims to ensure that software meets the highest standard needed to keep drivers, other road users and pedestrians safe.

While these standards are necessary, in practice they present a huge challenge for software developers, as the more common static analysis testing approach does not offer enough reliability on its own. Using static analysis for such a large task will produce a mass of output, including false positives, that must be interpreted by developers, who use their experience to determine the best course of action and then build the tests needed to confirm that each error has been corrected.

In the well-documented Toyota unintended acceleration case, engineers who checked the engine control application found more than 85,000 violations of MISRA’s 2004 edition, including buffer overflows, invalid pointers, stack overflows and others. While this demonstrates the effect of poor coding and quality standards, it also highlights the vastness of the task of testing automotive software components. By MISRA’s own rules, there is an industry-recognized metric that equates the number of broken rules to the number of bugs: for every 30 rule violations you can expect to see, on average, three minor bugs and one major bug.
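Applying that ratio to the Toyota figure gives a sense of scale. The sketch below is a rough estimate only: the ratio is an average, the function name `estimate_bugs` is hypothetical, and 85,000 is used as a lower bound because the text says "more than".

```python
def estimate_bugs(violations: int) -> tuple[int, int]:
    """Apply the quoted ratio: 30 violations -> 3 minor bugs and 1 major bug.

    Returns (minor, major), rounded down to whole bugs.
    """
    if violations < 0:
        raise ValueError("violations cannot be negative")
    minor = violations * 3 // 30
    major = violations // 30
    return minor, major


minor, major = estimate_bugs(85_000)
print(f"Expected minor bugs: {minor:,}")
print(f"Expected major bugs: {major:,}")
```

On those assumptions, 85,000 violations would correspond to roughly 8,500 minor bugs and more than 2,800 major ones.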

It’s a time-consuming effort, and once a potential exploitation method or vulnerability has been investigated and documented, it requires the support of the tool vendor to include the process heuristic in its analysis algorithms. It is therefore difficult for any single-purpose off-the-shelf package to catch all the different types of security and safety vulnerabilities that can be found in code, and teams need to deploy a suite of tools, including static analysis, unit testing and code coverage, to make sure they are achieving the highest degree of testing completeness.

Now that automotive software developers have a bigger legal obligation to carry out comprehensive and thorough testing of all software components, what’s needed in the future is a platform that can analyze the efforts needed to meet industry testing requirements/regulations and produce “actionable intelligence”.

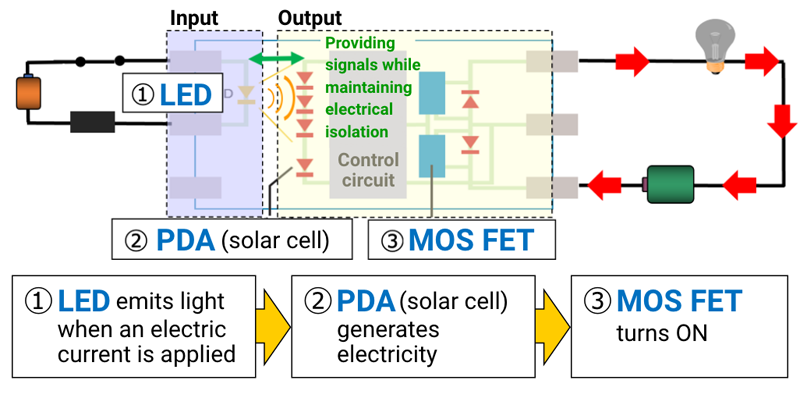

Such a system will use static analysis as an indicator of potential deviation from the standard requirements. It would then automatically build and run all the unit test cases required to isolate potential coding errors. With the ever-increasing size of source code bases and a drive towards a DevOps approach, software testing needs to be continuous and to highlight quickly the areas of code that need attention (see Figure 2). What’s needed is a testing system that makes the obligation to produce fail-safe software a little more bearable and lets us feel secure in our cars.

Figure 2: Continuous Test Process
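One way to picture the idea of static analysis findings driving unit tests is the sketch below. Everything in it is hypothetical: the `Finding` type, the `triage` function, the rule identifiers and the unit names are invented for illustration, and it only runs tests that already exist rather than building them automatically as the envisaged platform would.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Finding:
    unit: str   # function or module flagged by static analysis
    rule: str   # identifier of the violated rule (made up for this sketch)


def triage(findings: list[Finding],
           tests: dict[str, list[Callable[[], bool]]]) -> dict[str, str]:
    """Run the unit tests of every flagged unit and suggest a next action."""
    report = {}
    for unit in sorted({finding.unit for finding in findings}):
        unit_tests = tests.get(unit, [])
        if not unit_tests:
            report[unit] = "no tests: write tests first"
        elif all(test() for test in unit_tests):
            report[unit] = "tests pass: review finding as possible false positive"
        else:
            report[unit] = "tests fail: fix code"
    return report


# Hypothetical findings and stand-in tests (each test returns True on pass).
findings = [
    Finding("throttle_control", "RULE-A"),
    Finding("window_lift", "RULE-B"),
    Finding("radio_tuner", "RULE-A"),
]
tests = {
    "throttle_control": [lambda: True, lambda: False],  # one failing test
    "window_lift": [lambda: True],
}

report = triage(findings, tests)
for unit, action in report.items():
    print(f"{unit}: {action}")
```

The point of the design is that the developer receives a short list of next actions per unit instead of a raw list of rule violations.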

Developers and testers of safety-critical software are unsung heroes who don’t usually get as much recognition as those working on cool mobile or internet apps, but they have a significant role to play in our everyday safety as we go about our business, be it by train, plane or automobile.