We’ve been dreaming of gesture recognition for years

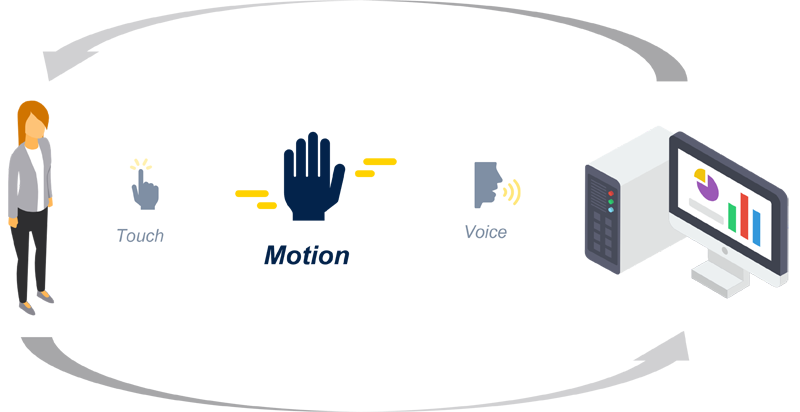

Figure 1: Typical methods of interacting with high-tech equipment

Some may remember the 2002 film Minority Report with Tom Cruise, where the main character uses special gloves to control an interface. Some years later, Tony Stark in Iron Man 2 manipulated and analyzed holographic renditions of various environments using hand gestures and voice commands. Many have dreamed about having the kind of control that these famous characters demonstrated.

Now, gesture recognition is becoming a reality thanks to a range of new technologies from innovative companies. Vision-based solutions are common, but they require a camera. Given that many people cover their laptop webcams to protect their privacy, vision-based systems can feel quite intrusive. Other approaches rely on less intrusive technologies like radar, ultrasound, and now Time-of-Flight (ToF).

Gesture recognition is part of the Human-Machine Interface (HMI) where the principle is to control a user interface directly by using human actions. In addition to hand gestures, other means of interaction include voice control using a microphone and touch control directly on a display or through capacitive sensing electrodes (figure 1). Various types of equipment can be controlled using any of these means, such as thermostats, vending machines, coffee machines, home appliances, and industrial robots, in addition to consumer electronics like PCs, smartphones, and televisions. Advanced HMI technologies are already permeating all these examples, generally using touchscreen or voice control. Soon, however, gesture recognition is expected to become widely used and will simplify users’ lives even more.

Why Gesture Recognition?

There are many ways gesture control can enhance the user experience (figure 2). The main one is enabling a highly user-friendly GUI with no physical buttons, attractive visual themes, and convenient, efficient interaction. Because gesture recognition lets users avoid touching controls, it also solves problems such as operating an oven or hob with dirty hands while cooking. Similarly, gesture control can reduce reliance on a remote control for changing television channels. Gesture could also enable new features in everyday products, improving the user experience and increasing customer satisfaction. As the technology matures, even more applications will likely become apparent.


Figure 2: Gesture control can enhance safety and hygiene as well as easing user interaction

There is also a safety aspect to gesture control, which has become starkly apparent since the recent pandemic. Fear of transmission through various mechanisms including direct contact with contaminated surfaces, as well as closeness to infected people, drove governments effectively to halt the world economy. Even now, typical procedures for obtaining essential items like travel tickets from a vending machine involve touching the keypad or screen, compelling us to sanitize immediately afterwards for protection. Gesture recognition provides inherent protection by allowing touch-free control of the user interface. It saves time and makes travel safer.

Anyone who has played on a modern game console will have experienced motion detection to control their character in a game. The early versions of some of the most widely known consoles suffered from drawbacks such as the high cost of camera hardware and the powerful processing engine needed behind it. Other systems required players to hold a remote to detect motion. Gesture recognition now brings more fun and immediacy when controlling videogames or toys. It could also enhance the user experience of new games now arriving in the market with Augmented Reality and Virtual Reality (AR/VR) technology. Gesture fits perfectly with these, removing reliance on any handheld device and enabling immersive experiences.

Industrial safety can benefit, too. Physical switches and buttons that require workers to look away from the machine at critical moments can compromise production quality, human safety, or both. Gesture recognition permits better, safer applications by enabling workers to control the machine intuitively without looking at buttons.

Typical Gesture Recognition Technologies

Vision-based gesture recognition systems have several drawbacks in addition to the cost and power consumption associated with the camera and processing subsystem. Increasing accuracy requires greater camera resolution, with an associated increase in processor loading. In addition, the intrusiveness of the technology, mentioned earlier, is a major limitation. The camera must always be on to track the motion associated with any potential gesture. This could be problematic in a private space like a house, or at work for security reasons. Moreover, depending on the processing engine’s capabilities, the data may be handled directly on the host. Some Artificial Intelligence applications, however, may require data to be sent to the cloud, in which case control of personal data is lost.

Other technologies typically used to recognize gesture motions include ultrasound. However, ambient noise can affect performance and the panel containing the sensor must have a hole for the microphone and the loudspeaker to work.


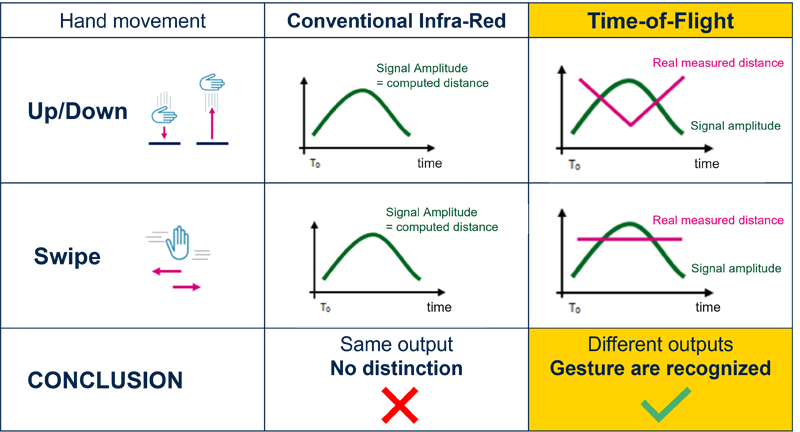

Figure 3: ToF sensors can extract movement and position information that ordinary infrared sensors cannot distinguish

Infrared sensors, on the other hand, can handle very basic motion detection but cannot distinguish between different gestures like tap and swipe, as figure 3 illustrates. Infrared is suitable for controlling a smart button, but little else. It is also very sensitive to ambient light as well as to the target color and reflectance, so the sensor may fail to detect a very dark target.

A new technology capable of overcoming these issues has recently entered the gesture-recognition domain: Time-of-Flight (ToF) sensing.

Newcomer: Time-of-Flight Technology

The ToF principle works with a sensor that contains a light emitter and a receiver. The emitter transmits photons, and the system measures the time for them to return to the sensor. Based on the speed of light, as a constant, the system uses information from the emitter and receiver to calculate the distance between the target and the sensor.
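The distance calculation described above can be sketched in a few lines. This is only an illustration of the principle, not the firmware of any particular sensor: the function names are hypothetical, and the division by two assumes the measured time covers the full path from emitter to target and back to the receiver.

```python
# Minimal sketch of the Time-of-Flight distance principle.
# Function names are illustrative assumptions, not a sensor API.

SPEED_OF_LIGHT_M_PER_S = 299_792_458.0  # exact value by SI definition


def distance_from_round_trip(round_trip_s: float) -> float:
    """Return the target distance in metres for a measured round-trip time.

    The photons travel to the target and back, so the one-way
    distance is half of (speed of light x elapsed time).
    """
    if round_trip_s < 0:
        raise ValueError("round-trip time cannot be negative")
    return SPEED_OF_LIGHT_M_PER_S * round_trip_s / 2.0


def round_trip_for_distance(distance_m: float) -> float:
    """Inverse helper: the round-trip time in seconds for a given distance."""
    if distance_m < 0:
        raise ValueError("distance cannot be negative")
    return 2.0 * distance_m / SPEED_OF_LIGHT_M_PER_S


if __name__ == "__main__":
    # A hand 30 cm from the sensor returns photons after about 2 nanoseconds.
    t = round_trip_for_distance(0.30)
    print(f"round trip: {t * 1e9:.3f} ns")
    print(f"distance:   {distance_from_round_trip(t):.3f} m")
```

The timescale is the practical point here: at typical gesture distances the round trip lasts only a few nanoseconds, which is why the timing is done in dedicated hardware inside the sensor rather than in host software.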

ToF sensors are already used in many different applications, including wall tracking in mobile robots like vacuum cleaners and presence activation of instruments like digital home-heating thermostats or similar control panels. Some applications also use this technology for simple gesture recognition called “touchless button” or “smart switch”. These are typically used to turn on/off a light or to activate a smart faucet. With this technology, it is possible to distinguish a tap from a swipe.


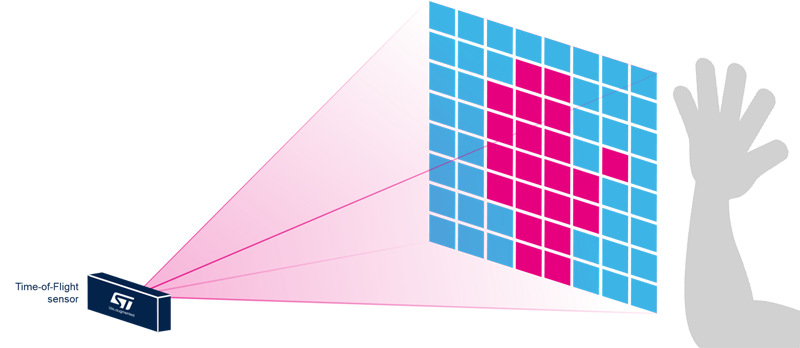

Figure 4: Multizone ToF sensors enhance scene-analysis capabilities

Now, companies like STMicroelectronics are taking things a step further with new ToF sensors that create multiple zones (figure 4) whereas previous ToF sensors supported only one zone. Compared to these older single-zone sensors, multizone sensing is a major step forward that permits new use-cases and new applications like complex and robust gesture recognition.

With an 8x8 array comprising 64 zones, and a wide field-of-view (generally >60°), ST’s multizone ToF sensors calculate the X/Y/Z coordinates of a moving object in real-time. This enables hand tracking and thus recognition of gestures like tapping, swiping, level control, and more. Combined with Artificial Intelligence, this multizone ToF technology can also be used to recognize hand posture and thus detect signs such as “like”, “OK”, and others.
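To make the idea of hand tracking on a multizone distance matrix more concrete, here is a simplified sketch. It is not ST’s algorithm: the frame layout (an 8x8 list of distances in millimetres), the thresholds, the function names, and the mapping of column direction to “left” and “right” are all assumptions chosen for illustration. The sketch estimates a hand position as the centroid of the zones that see a nearby target, then classifies a short sequence of frames as a swipe or a tap.

```python
# Illustrative sketch only: frame layout, thresholds, and names are assumptions.
from typing import List, Optional, Tuple

Frame = List[List[float]]  # 8x8 grid of distances in millimetres (assumed)
Position = Tuple[float, float, float]  # (x in zones, y in zones, z in mm)


def hand_position(frame: Frame, max_range_mm: float = 400.0) -> Optional[Position]:
    """Return the centroid of zones that see a target closer than max_range_mm.

    x and y are the mean column and row index of those zones, z is their
    mean distance. Returns None when no zone sees a nearby target.
    """
    hits = [
        (col, row, dist)
        for row, line in enumerate(frame)
        for col, dist in enumerate(line)
        if 0 < dist < max_range_mm
    ]
    if not hits:
        return None
    n = len(hits)
    return (
        sum(h[0] for h in hits) / n,
        sum(h[1] for h in hits) / n,
        sum(h[2] for h in hits) / n,
    )


def classify_gesture(
    frames: List[Frame],
    min_travel_zones: float = 3.0,
    min_push_mm: float = 100.0,
) -> str:
    """Classify a sequence of frames as a swipe, a tap, or "none"."""
    track = [p for p in map(hand_position, frames) if p is not None]
    if len(track) < 2:
        return "none"

    dx = track[-1][0] - track[0][0]  # lateral travel across columns
    dy = track[-1][1] - track[0][1]  # lateral travel across rows

    # A swipe is a large lateral displacement between first and last position.
    if abs(dx) >= min_travel_zones and abs(dx) >= abs(dy):
        return "swipe_right" if dx > 0 else "swipe_left"
    if abs(dy) >= min_travel_zones:
        return "swipe_down" if dy > 0 else "swipe_up"

    # A tap is a push towards the sensor without much lateral travel.
    nearest = min(p[2] for p in track)
    if track[0][2] - nearest >= min_push_mm:
        return "tap"
    return "none"
```

A real product would add filtering, timing constraints, and handling for the physical orientation of the sensor, but the sketch shows why a matrix of distances is enough to drive a user interface without capturing any image.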


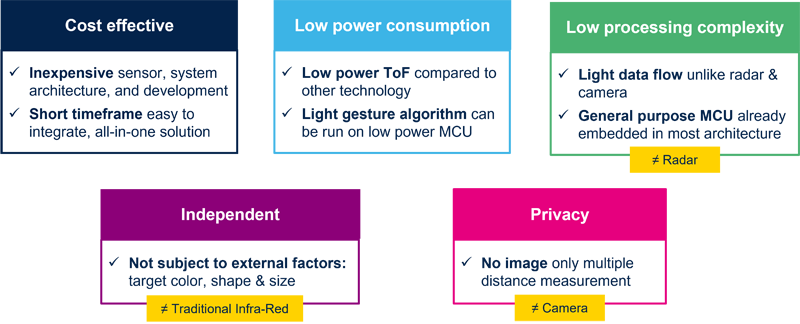

Figure 5: Multizone ToF sensors deliver multiple advantages at system and user level

All these gestures, once detected by the sensor, can be used easily in any software application because the sensor outputs only the recognized gesture.

Privacy is a major benefit of the ToF technology compared to vision-based sensing. Because ToF sensors provide only a depth map, there is no image. There is only a matrix of distances computed by the sensor to calculate the range and motion, which is all the host system needs to control a user interface. Another advantage is that ToF sensors work in low light and even in darkness because they have their own emitter that operates in the non-visible part of the spectrum (generally 940 nm). Hence, gesture recognition can be achieved in environments where a vision-based system could not operate. The ToF technology is also unaffected by the target color or reflectance, unlike infrared solutions. This allows gesture recognition to work well even if the user is wearing gloves or dark clothing. The low processing complexity is another key point, as it makes it possible for all the processing to be done on the chip. Figure 5 summarizes the benefits.