Smarter Together: How Agentic AI and Cobots Are Transforming Industrial Operations

How agentic AI and cobots work together to transform industrial operations

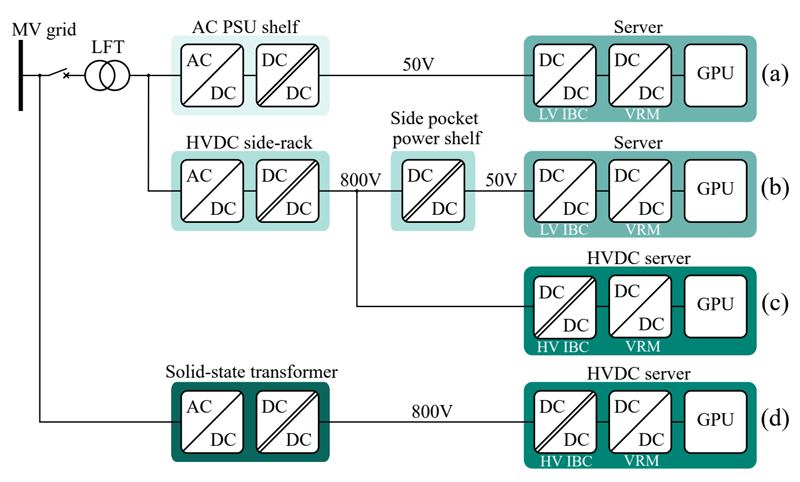

Figure 1: The Knowledge Ingestion Pipeline: How LLMs and VLMs absorb expertise from human video demonstrations and technical documents to generate robot task policies

Agentic AI provides the “brain” for autonomous decision-making, while cobots provide the physical presence—with the sensors, force limitations, and safety-by-design required to work alongside humans in complex operational environments. Together, they form a more capable system than either technology alone.

The Transition from 'Repeat' to 'Decide': LLMs, VLMs, and the Knowledge Absorption Problem

Traditional industrial robots were limited by rigid programming, requiring every movement to be manually coded. While effective for high-volume tasks, this approach was brittle; any change in part geometry forced a complete rewrite of the software.

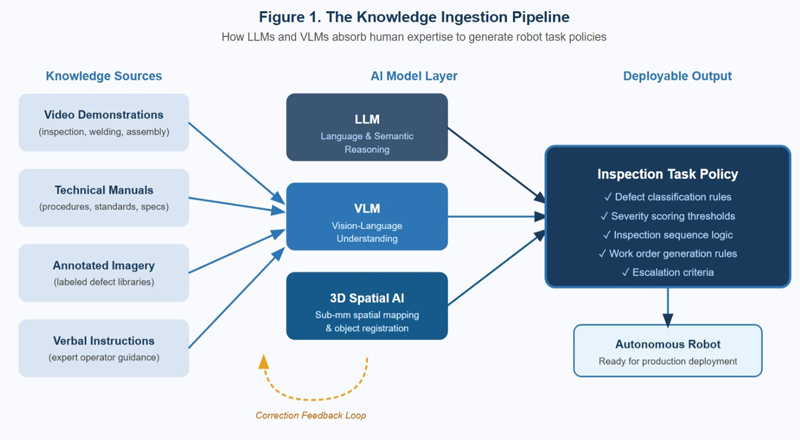

Modern systems use Large Language Models (LLMs) and Vision Language Models (VLMs) to shift the input from code to human-centric knowledge, such as video demonstrations and written manuals. For example, a VLM-equipped inspection system can learn to identify defective welds by watching training videos. Unlike conventional machine vision, which relies on fragile, hand-engineered templates, these models develop a generalized understanding that remains accurate despite changes in lighting, orientation, or surface finish. (See Figure 1.)

LLMs contribute a complementary capability: semantic reasoning over unstructured procedural text. A robot that can parse a maintenance manual can identify which defect categories are safety-critical versus cosmetic and update its operating procedure when a revised manual is issued, without any reprogramming. The combination of visual understanding and language-grounded reasoning creates a system capable of absorbing human knowledge and translating it into executable robotic behavior.

This architecture also enables incremental learning. When a human operator overrides an autonomous decision, that correction feeds back into the model as a labeled training example. Over time, the system's judgment converges toward the tacit expertise of the workforce, capturing knowledge that would otherwise retire with experienced inspectors.
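As a rough illustration of that feedback path, the sketch below stores an operator override as a labeled example for a later retraining run. The class names, field names, and label strings are hypothetical and chosen only for illustration; a production system would also need versioning, review, and data governance.

```python
from dataclasses import dataclass, field


@dataclass
class LabeledExample:
    image_id: str        # hypothetical reference to the inspected image
    model_label: str     # what the model decided autonomously
    operator_label: str  # what the human operator decided instead


@dataclass
class FeedbackBuffer:
    """Collects operator overrides for a later retraining run."""
    examples: list = field(default_factory=list)

    def record(self, image_id: str, model_label: str, operator_label: str) -> bool:
        # Only a disagreement counts as an override; agreements carry no correction.
        if model_label == operator_label:
            return False
        self.examples.append(LabeledExample(image_id, model_label, operator_label))
        return True


buffer = FeedbackBuffer()
buffer.record("weld-0017", "acceptable", "porosity")  # override, stored
buffer.record("weld-0018", "undercut", "undercut")    # agreement, skipped
```

Only the first call adds an example here, because the second one records agreement between the model and the operator.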

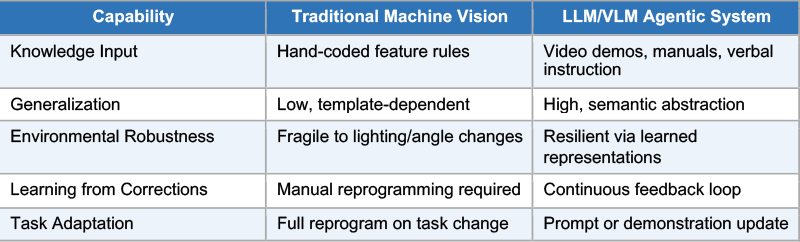

Table 1: Traditional rule-based machine vision versus LLM/VLM-enabled agentic inspection across five operational dimensions

The Autonomous Inspection Loop: From Defect Detection to Work Order Generation

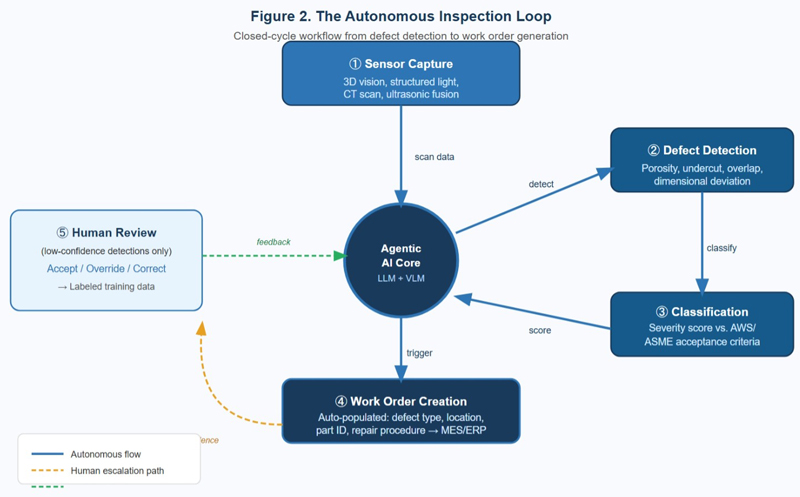

The highest-value application of agentic AI in manufacturing today is the autonomous inspection loop: a closed-cycle workflow in which a robot independently detects a defect, classifies its severity and type, determines the appropriate remediation, and generates the corresponding work order in the enterprise repair queue, all without human intervention.

This capability is particularly significant for three material processing categories where defect morphology is complex, variable, and consequential: welding, casting, and forging.

Weld inspection has historically been labor-intensive and inconsistent. Surface discontinuities, including porosity, undercut, overlap, and incomplete fusion, vary in geometry, location, and significance depending on the structural application. A VLM-equipped robotic system with a calibrated 3D spatial awareness layer can detect these features at sub-millimeter resolution, classify them against applicable acceptance criteria such as AWS D1.1 or ASME Section IX, and assign a severity score. If the severity score exceeds the acceptance threshold, the system autonomously creates a repair work order in the plant's MES or ERP system, populated with defect type, location coordinates, part ID, and recommended repair procedure. (See Figure 2.)

Figure 2: The Autonomous Inspection Loop: A closed-cycle workflow showing how agentic AI moves from defect detection through classification, severity scoring, and automated work order generation in the repair queue
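The final step of the loop, turning a scored detection into a work order, can be sketched as follows. This is a minimal illustration under stated assumptions: the 0-to-1 severity scale, the threshold value, the repair lookup table, and every field name are hypothetical, and no real MES or ERP interface is shown.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Detection:
    part_id: str
    defect_type: str    # e.g. "porosity", "undercut"
    location_mm: tuple  # (x, y, z) in the part's coordinate frame
    severity: float     # assumed scale: 0.0 (cosmetic) to 1.0 (critical)


# Hypothetical lookup; real procedures come from the applicable code and plant standards.
REPAIR_PROCEDURES = {
    "porosity": "grind out and re-weld",
    "undercut": "fill pass and blend",
}

SEVERITY_THRESHOLD = 0.6  # assumed value, for illustration only


def build_work_order(d: Detection, threshold: float = SEVERITY_THRESHOLD) -> Optional[dict]:
    """Return a work-order payload if severity exceeds the threshold, else None."""
    if d.severity <= threshold:
        return None
    return {
        "part_id": d.part_id,
        "defect_type": d.defect_type,
        "location_mm": d.location_mm,
        "severity": d.severity,
        # Unknown defect types fall back to a human rather than a guessed procedure.
        "recommended_repair": REPAIR_PROCEDURES.get(d.defect_type, "refer to engineer"),
    }


order = build_work_order(Detection("P-1042", "porosity", (120.5, 33.0, 4.2), 0.82))
skipped = build_work_order(Detection("P-1043", "undercut", (10.0, 5.0, 0.0), 0.30))
```

In this sketch the first detection produces a payload carrying the four fields the article names, while the second falls below the threshold and generates nothing.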

Casting inspection presents a different challenge: many critical defects are subsurface and invisible to optical systems. Agentic AI operates here in conjunction with CT scanning or ultrasonic testing hardware, fusing multi-modal sensor data, including surface imagery, volumetric scan, and dimensional measurement, into a unified defect model. The LLM reasoning layer then applies acceptance criteria from design specifications and flags components for review or rework.

For forged components, dimensional conformance is the primary objective. Agentic systems using structured light or laser profilometry compare as-forged geometry against the nominal CAD model in real time, identify out-of-tolerance regions, and reason about whether the deviation falls within the allowable rework envelope or constitutes a scrap condition. This distinction, which previously required an experienced engineer, can now be made autonomously for most common types of dimensional deviation.
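The accept, rework, or scrap decision can be reduced to a simple sketch. The version below assumes a single symmetric tolerance and a single symmetric rework limit, both hypothetical; in practice the rework envelope usually depends on the feature and on the direction of the deviation, since excess material can often be machined away while missing material cannot.

```python
def disposition(deviations_mm, tolerance_mm, rework_limit_mm):
    """Classify a forging from per-point deviations (measured minus nominal), in mm."""
    if not 0 <= tolerance_mm <= rework_limit_mm:
        raise ValueError("expected 0 <= tolerance_mm <= rework_limit_mm")
    # The worst single point drives the decision in this simplified model.
    worst = max(abs(d) for d in deviations_mm)
    if worst <= tolerance_mm:
        return "accept"
    if worst <= rework_limit_mm:
        return "rework"
    return "scrap"


print(disposition([0.1, -0.2], tolerance_mm=0.25, rework_limit_mm=1.0))
print(disposition([0.1, -0.6], tolerance_mm=0.25, rework_limit_mm=1.0))
print(disposition([1.4, 0.0], tolerance_mm=0.25, rework_limit_mm=1.0))
```

The three calls illustrate a part inside tolerance, a part outside tolerance but inside the assumed rework limit, and a part beyond it.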

The productivity implications are substantial. Autonomous inspection eliminates inspector fatigue and shift-to-shift variability, the two primary drivers of escaped defects in conventional quality control workflows.

Complementary by Design: Where Cobots Remain Essential

Agentic AI and cobots are not competing paradigms—they are complementary layers of a more capable system. This is not a transitional arrangement pending some future breakthrough; it reflects the distinct and durable strengths of each technology. In complex and dynamic operational environments, many of the highest-value use cases simply cannot be addressed by software intelligence alone.

This dynamic is clearly visible in industrial depot maintenance operations. Many of the use cases being addressed in those environments require the physical presence, sensor integration, and human-safe force limitations that cobots provide. Some cobots are specifically engineered with compliant actuators and proximity sensing to operate safely beside human technicians—a safety requirement no purely software-based agent can satisfy. Agentic AI provides the reasoning and decision layer; the cobot executes the physical action: handling, assembling, or inspecting parts in environments that are dynamic, constrained, and safety-critical.

The most important boundary today is high-complexity adaptive welding. Robotic systems can autonomously inspect welds with high fidelity. They cannot yet autonomously execute the real-time decisions experienced human welders make: adjusting arc energy in response to joint fit-up variation, compensating for part distortion mid-pass, or recognizing that a joint configuration requires a technique not in the training data. These decisions draw on embodied, perceptual expertise not yet reliably capturable by current VLM architectures.

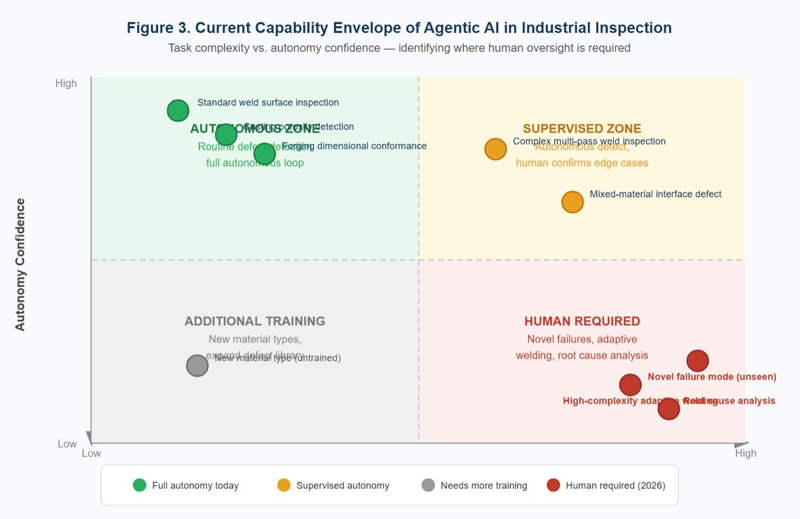

A second boundary is novel failure mode detection. When a defect type absent from the training data appears, such as a new material incompatibility or an unfamiliar failure pattern introduced by a process change, the system may confidently misclassify it. This is the well-documented distribution shift problem in machine learning, and it is particularly hazardous in high-consequence inspection contexts. Current best practice requires human review of any result that falls in a low-confidence region of the model's output distribution. (See Figure 3.)

Figure 3: Current Capability Envelope of Agentic AI in Industrial Inspection: A quadrant map plotting task complexity versus autonomy confidence, identifying the 'human-in-the-loop required' zone for novel defect types and high-complexity adaptive processes

Third, autonomous agentic systems currently lack robust causal reasoning about why a defect occurred. Identifying that porosity is present in a weld is within the system's capability. Determining whether it results from contaminated shielding gas, improper travel speed, or base metal chemistry requires integrating process data from multiple upstream systems and applying metallurgical reasoning that exceeds current model capability. Root cause analysis remains a human domain.

When will these gaps close? Multimodal models are improving at reasoning about physical processes. Digital twin integration is beginning to provide the upstream process context that root cause analysis requires. A reasonable working assumption for capital planning: the boundary between autonomous and human-required decisions will advance meaningfully over a two-to-four-year horizon for inspection applications, while higher-order reasoning tasks will require human involvement for considerably longer.

Strategic Implications for Manufacturers and Logistics Professionals

The appropriate response to this technological moment is to start with autonomous inspection. This is where the technology is most mature and the ROI is most quantifiable. Piloting agentic inspection on a single product family provides critical operational data and surfaces integration challenges without excessive risk.

Simultaneously, invest in data infrastructure. Agentic AI performance depends heavily on data quality. Manufacturers with digitized defect libraries and instrumented processes will scale significantly faster than those starting from an analog baseline.

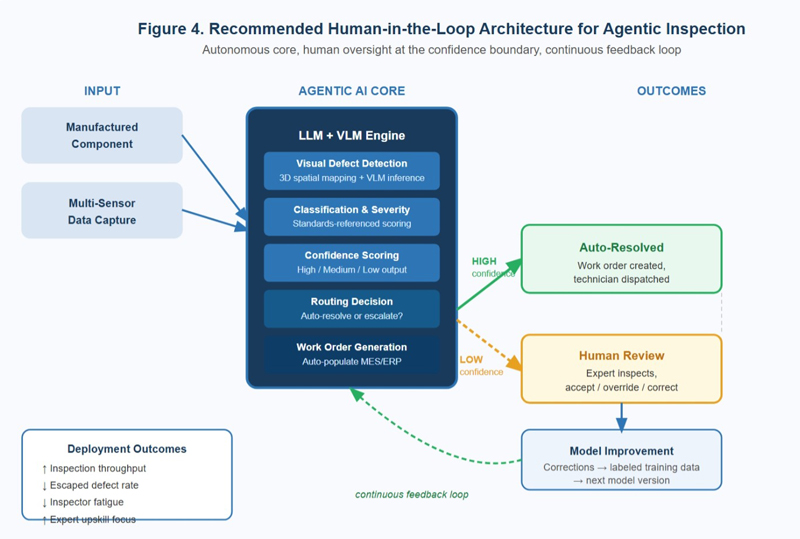

Design for human-in-the-loop at the boundaries. The goal is not to remove humans from the inspection process; it is to remove humans from the routine center so they can concentrate expertise on the uncertain edges. System design should route low-confidence detections to human review and create feedback mechanisms that turn those reviews into training data. (See Figure 4.)

Figure 4: Recommended Human-in-the-Loop Architecture for Agentic Inspection Deployment: Showing autonomous processing of routine decisions, escalation pathways for edge cases, and the feedback loop that converts human corrections into model improvements
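The routing rule at the heart of this architecture can be sketched in a few lines. The confidence field, the 0.9 review threshold, and the record layout are assumptions for illustration; an appropriate threshold would have to be calibrated against a plant's own escaped-defect data.

```python
def route(detections, review_threshold=0.9):
    """Split detections into an autonomous queue and a human-review queue."""
    autonomous, human_review = [], []
    for det in detections:
        # Anything below the threshold is escalated instead of acted on.
        if det["confidence"] >= review_threshold:
            autonomous.append(det)
        else:
            human_review.append(det)
    return autonomous, human_review


detections = [
    {"part_id": "P-2001", "label": "porosity", "confidence": 0.97},
    {"part_id": "P-2002", "label": "undercut", "confidence": 0.62},
    {"part_id": "P-2003", "label": "overlap", "confidence": 0.91},
]
autonomous, human_review = route(detections)
```

With these sample values, P-2002 is the only detection sent to a human reviewer. A confidence score alone will not catch a novel defect that the model misclassifies with high confidence, so a rule like this is a floor for escalation, not a substitute for periodic human audits of the autonomous queue.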

Finally, treat the technology as a capability-building platform, not a cost-reduction exercise. The manufacturers who will achieve durable competitive advantage from agentic AI are those who use it to redeploy human expertise upward, from executing inspections to designing inspection criteria, from performing repairs to engineering process improvements that reduce defect rates. The robot is not replacing the experienced inspector. It is freeing that inspector to do the work only humans can currently do.

Looking Ahead

The integration of agentic AI into industrial operations is not a distant future scenario—it is happening now, in inspection bays, quality control stations, and depot maintenance facilities around the world. And in most of these environments, it is happening alongside cobots, not instead of them. The autonomous inspection loop—detect, classify, remediate, document—is demonstrably mature. Organizations that deploy it thoughtfully in the next 12 to 24 months, with both the software intelligence and the physical robotic infrastructure it requires, will establish operational advantages that are difficult to replicate later.